Over 700 million people worldwide use the Telegram app. What sets it apart from other messaging apps is its open-source nature, which means that its code is freely available to the public. This openness has allowed Telegram to attract a vast community of contributors who regularly help improve the app and add new features.

The Product Science team is proud to be part of this community, and we are passionate about contributing to Telegram's success. We recognize the value of open-source technology and the opportunities it creates for collaboration and innovation. As such, we use the PS Tool to enhance the app and provide maximum value to users.

Recently, Telegram introduced a real-time translation feature that lets users translate messages and captions in any language. Our team is multilingual, and we were excited to use the new feature. With real-time translation, users can leave the language barrier behind and communicate with people from all over the world with ease. However, we noticed that it was a bit slow, so we dug into the code to find out why.

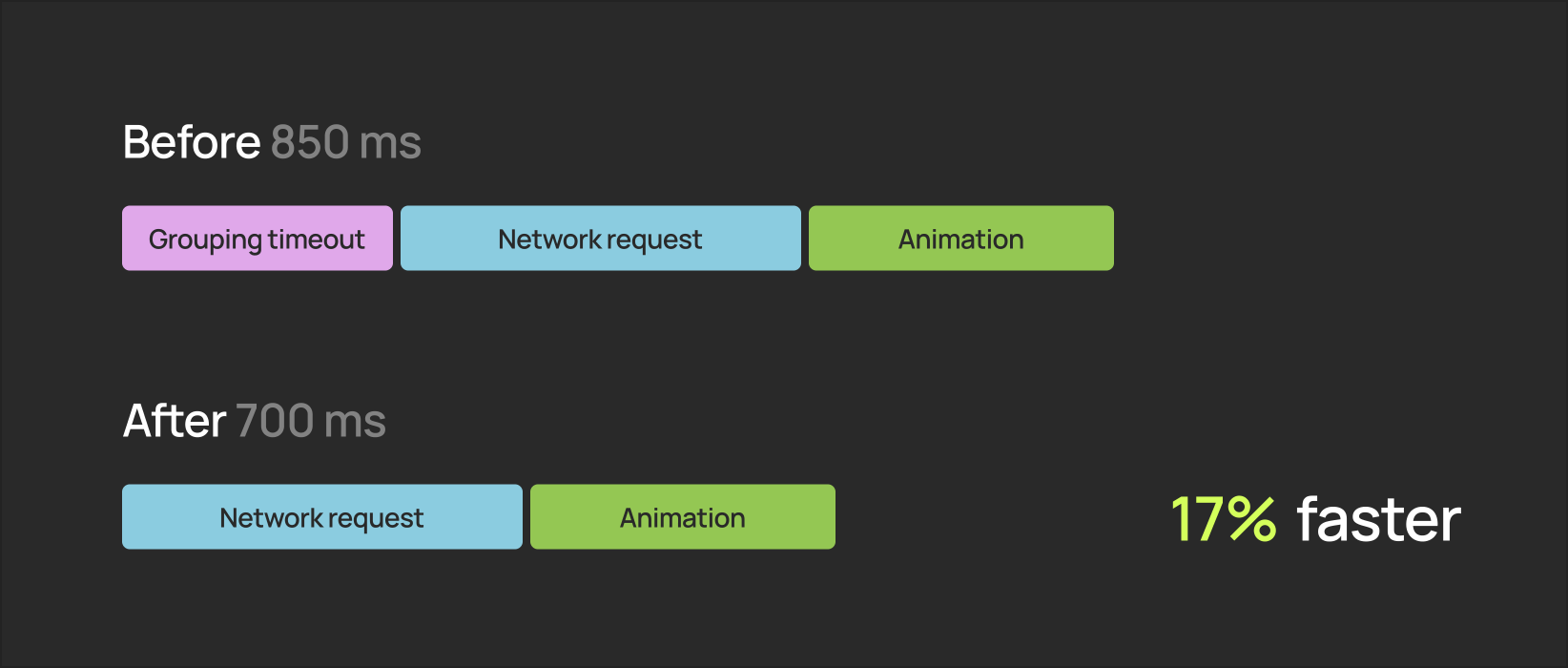

In this article, we discuss how we used AI-powered instrumentation to uncover a potentially problematic coding decision within the real-time translation feature and unlock an optimization opportunity of at least 17%.

The importance of translation flow for Telegram’s users

Sluggish performance can be frustrating, especially when your users rely on the translation feature to keep a conversation flowing smoothly, or even in an emergency.

Improving the performance of the translation feature can help reduce language barriers, enable more meaningful interactions, and foster a greater sense of community among Telegram users. Additionally, as more people use the app globally, having a reliable translation feature is essential to ensure that users can communicate effectively with each other regardless of language differences. The importance of this translation feature to users inspired Product Science to identify performance opportunities.

So what is this Real-Time Translation on Telegram?

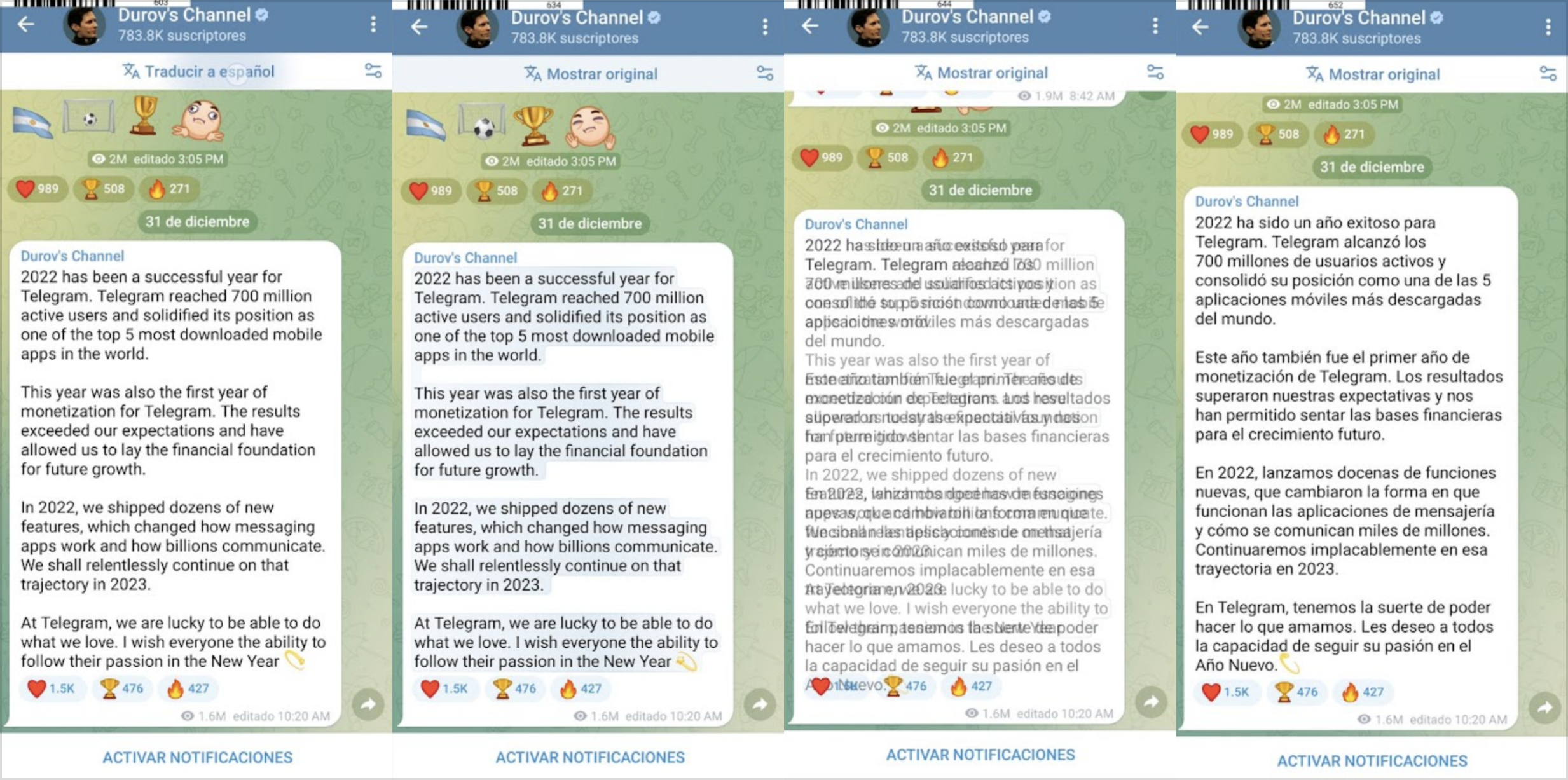

The translation flow runs from the moment the user taps the ‘Translate’ button to the moment the page is fully translated (see Pic 1).

In this example, we can see a significant 850 ms delay from the moment the user taps the ‘Translate’ button to the moment the translation is displayed. The tests were run on a mid-range Android device, a Galaxy A52, to represent the most common use case. The experience for users of less powerful phones would be much worse.

Identifying performance opportunities with PS Tool

Previously, investigating a performance issue required hours of manual instrumentation and several different software tools to piece the puzzle together, and even then we could not be sure of identifying the root cause.

With Product Science, automated instrumentation gives you visibility into even the smallest functions or libraries, ones you would never have imagined could have such a large impact on performance. In addition, you can use the AI-generated execution path to trace back what happened to the user in real life and identify the biggest opportunity for optimization.

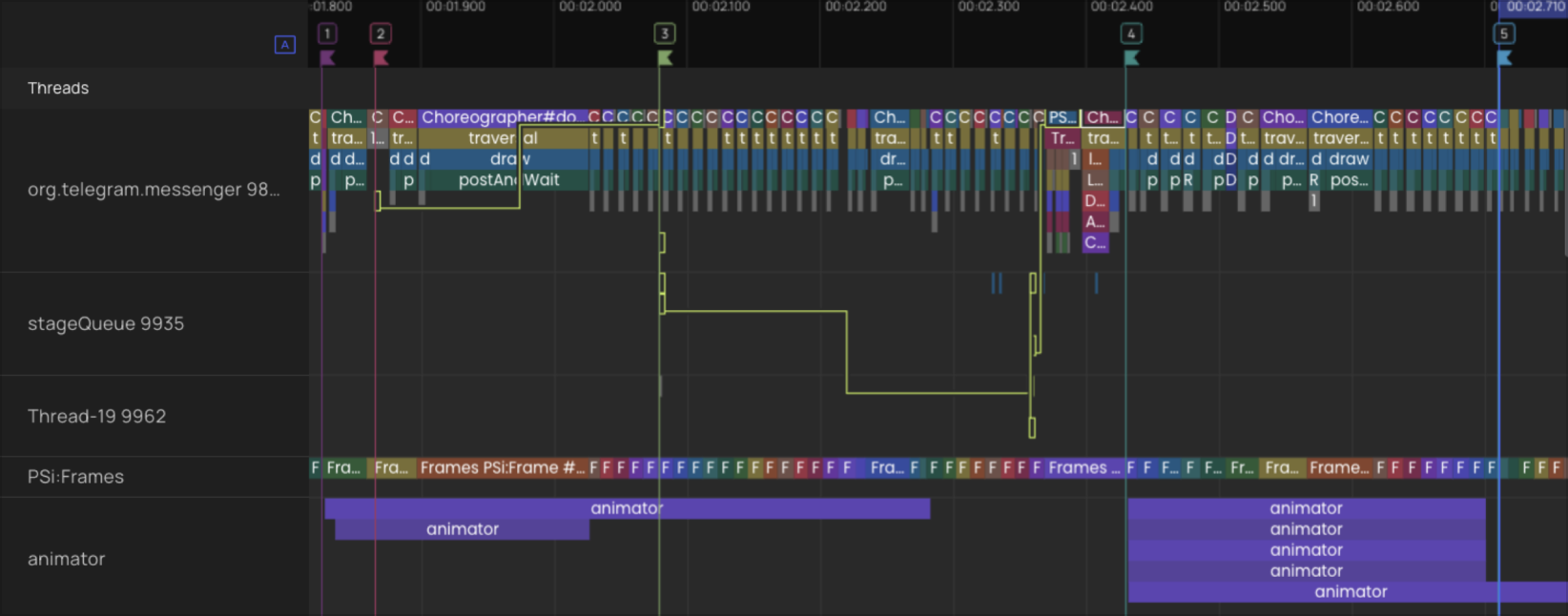

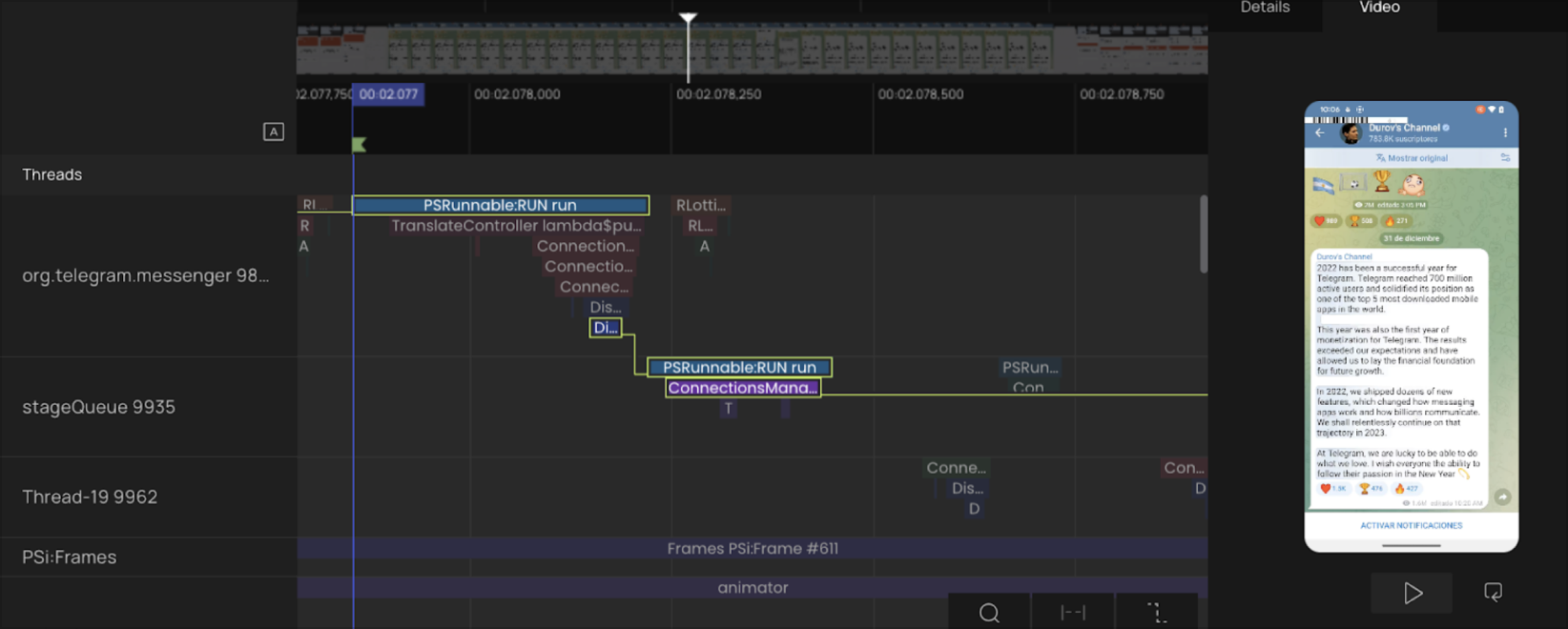

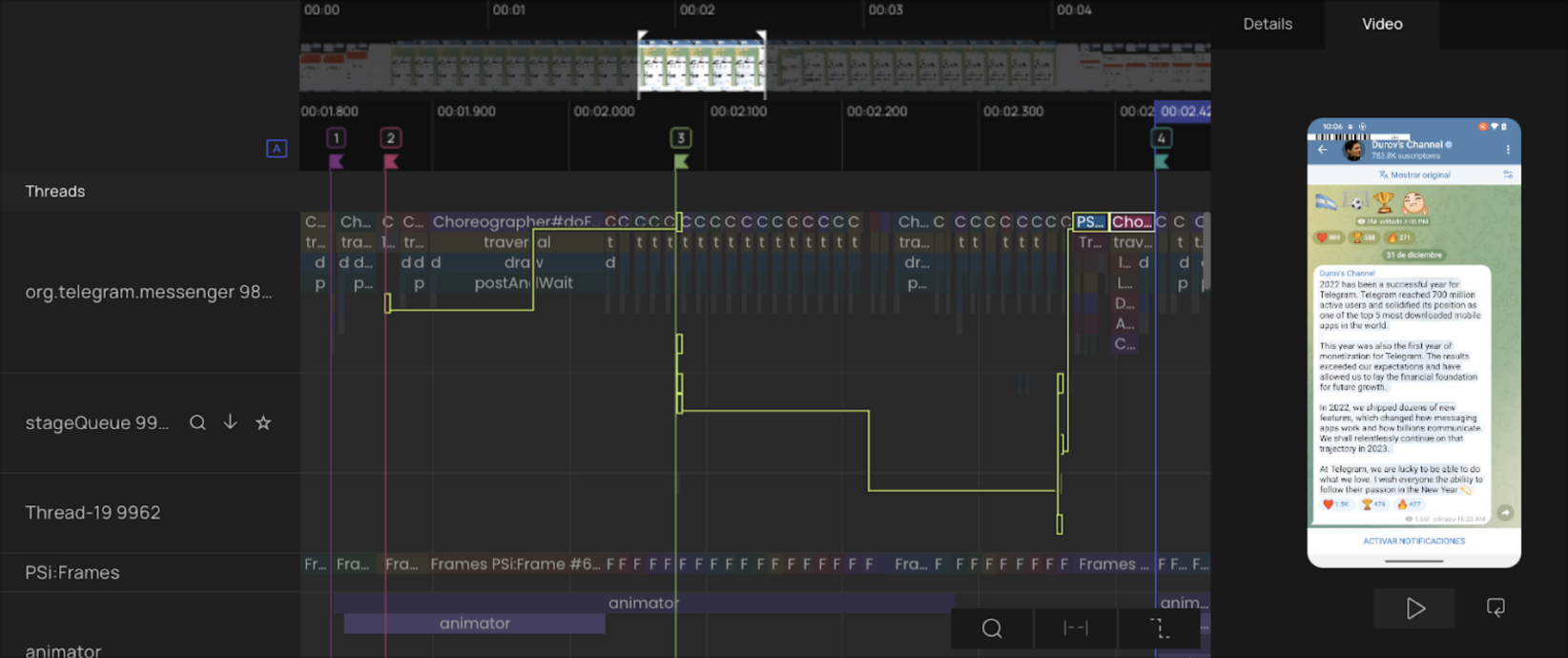

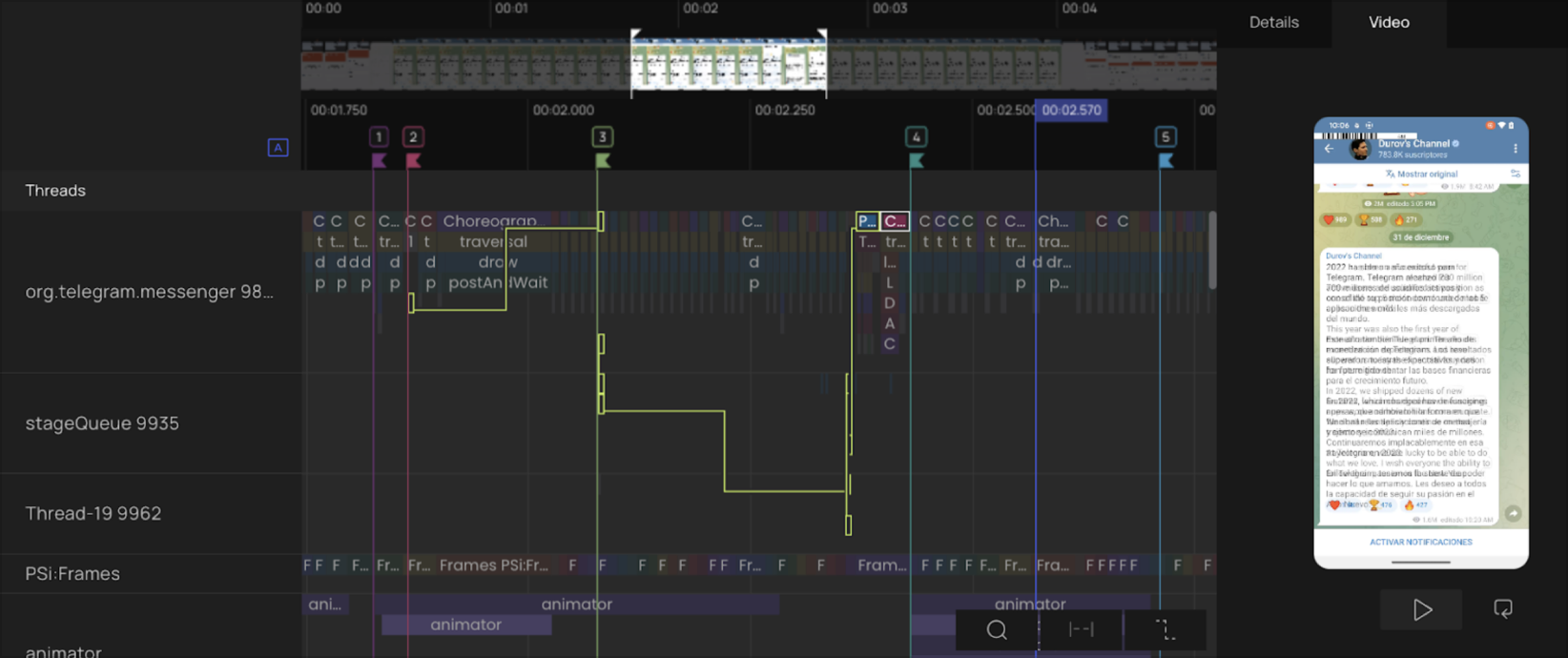

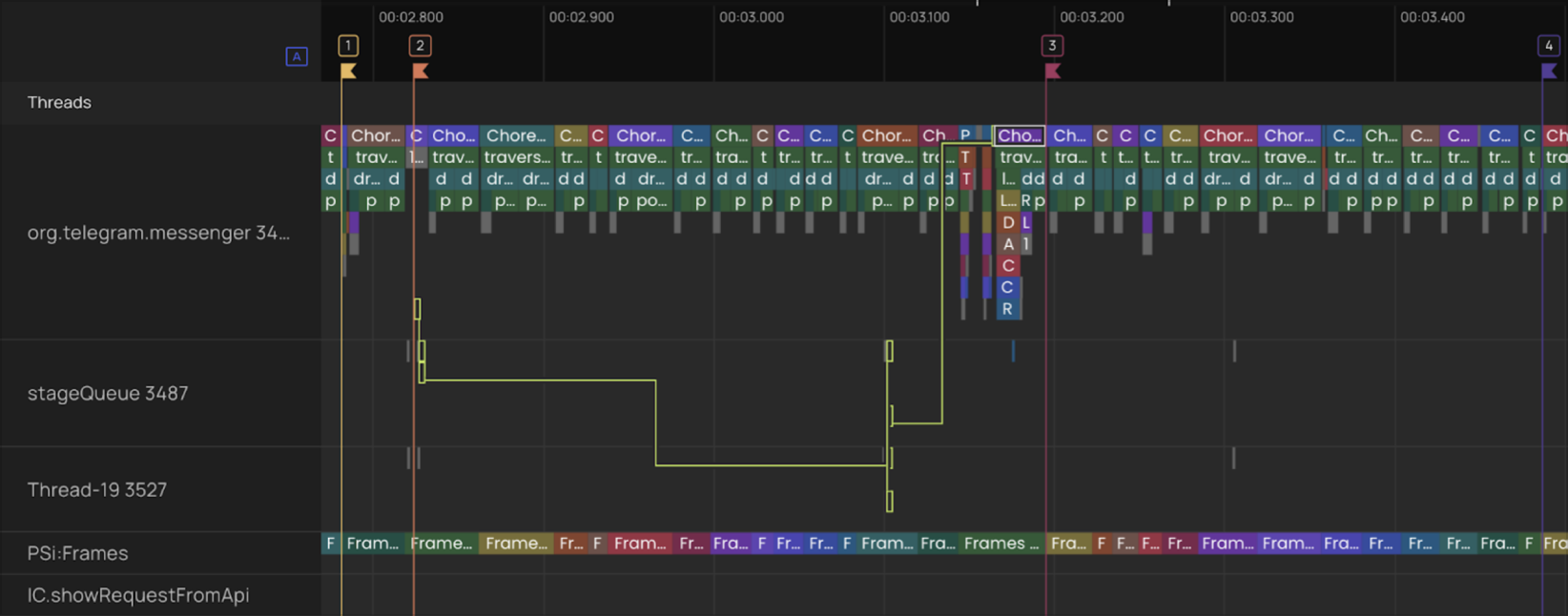

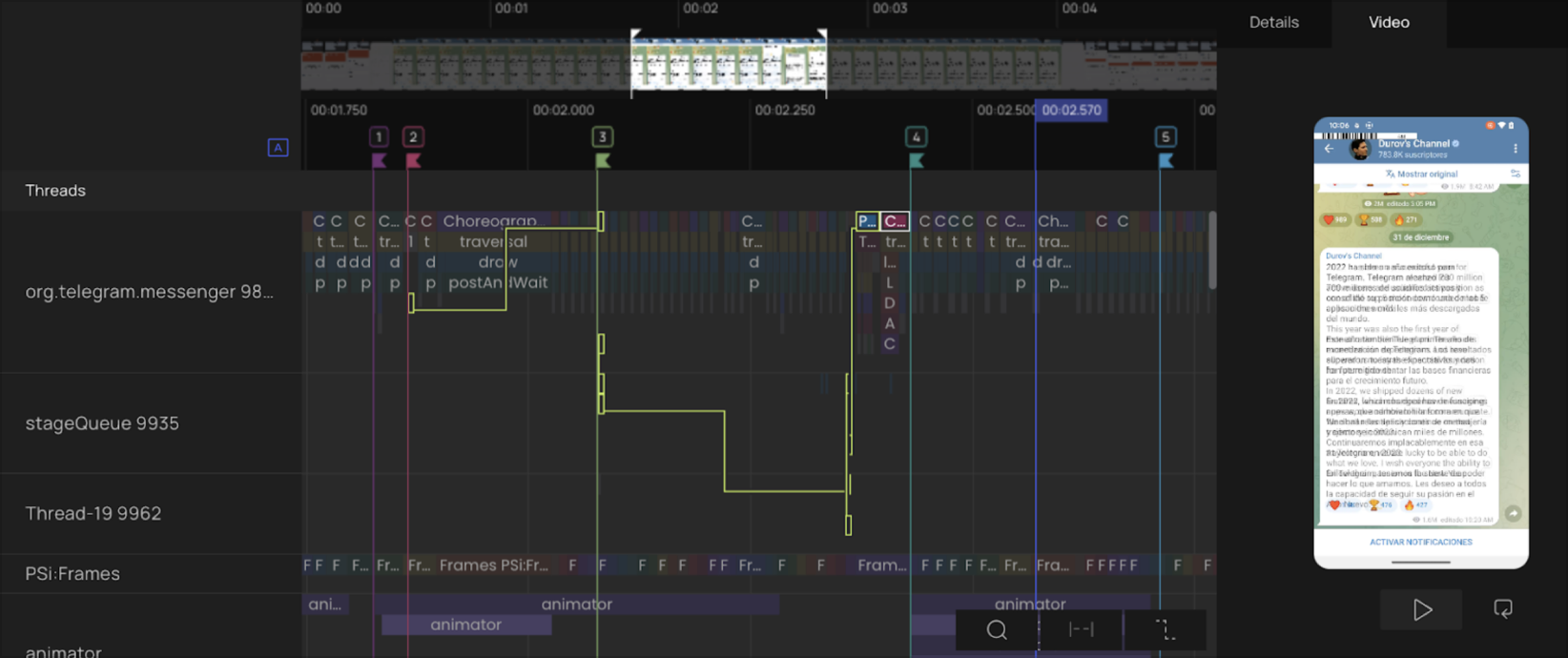

Here’s an example of an execution path (highlighted in yellow).

Before Fix

It shows the exact sequence of functions executed across multiple threads, from the moment the user taps the ‘Translate’ button to the moment the translation is completed and displayed. It also filters out all the functions and threads that did not participate in the execution of this particular user command and did not create a bottleneck.
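As a toy illustration of the idea, the sketch below walks backward from the final event through "caused by" links and keeps only the functions on that chain. This is not how PS Tool works internally; the span names, threads, and the `caused_by` field are all hypothetical and exist only to show what filtering out non-participating work means.

```python
# Hypothetical trace: each span records which earlier span triggered it.
SPANS = [
    {"name": "onTranslateTap", "thread": "main", "caused_by": None},
    {"name": "waitGroupingTimeout", "thread": "main", "caused_by": "onTranslateTap"},
    {"name": "sendTranslateRequest", "thread": "network", "caused_by": "waitGroupingTimeout"},
    {"name": "showTranslation", "thread": "main", "caused_by": "sendTranslateRequest"},
    # Unrelated work running at the same time; it should be filtered out.
    {"name": "loadAvatar", "thread": "image", "caused_by": None},
]


def execution_path(spans, end_name):
    """Return span names from the triggering event to `end_name`, in order."""
    by_name = {span["name"]: span for span in spans}
    path = []
    current = by_name.get(end_name)
    # Follow the causal links backward until we reach a span with no cause.
    while current is not None:
        path.append(current["name"])
        current = by_name.get(current["caused_by"])
    return list(reversed(path))


PATH = execution_path(SPANS, "showTranslation")
print(PATH)
```

In this made-up trace, the path crosses the main and network threads, while `loadAvatar` is dropped because nothing on the chain depends on it.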

What does the PS Tool detect about the Real-Time Translation in Telegram?

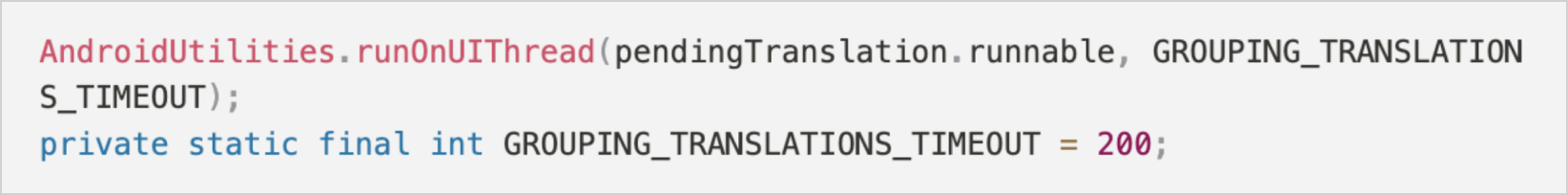

By utilizing the video synchronization feature of PS Tool, we detected a noteworthy delay in the screen recording of the initial build. We swiftly pinpointed the corresponding code and examined the crucial execution path. Our investigation revealed that the 200 ms delay between the user tapping the Translate button and the beginning of the translation process was hard-coded.

After the Translate button was pressed, the function waited until the end of a timeout, GROUPING_TRANSLATIONS_TIMEOUT, before pushing the translation request.

The last part of the execution path (between flags 4 and 5) is the animation for the transition from the original language to the chosen one.

Performance-oriented programming

Hardcoded delays in the frontend of mobile apps are usually worrisome. Such pieces of legacy code are hard to remove even when they are no longer necessary. As the name hinted, and as the Telegram team later confirmed, this timeout existed to allow several messages to be merged into a single request, so that the server would receive up to 20 messages at once instead of a separate request for each message text. Using a hardcoded timeout for this purpose risked a near-ANR (Application Not Responding) experience for users, as 200 ms can quickly grow into seconds when scrolling.
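To make the scrolling concern concrete, here is a minimal Python sketch of a debounced batcher. It is an illustrative model rather than Telegram's code: it assumes the timer restarts every time another message joins the pending batch, which the findings above do not confirm. The 200 ms value is the one reported in the trace; the function name and the arrival times are hypothetical.

```python
GROUPING_TRANSLATIONS_TIMEOUT_MS = 200  # value reported in the trace


def debounce_send_times(arrivals_ms, timeout_ms=GROUPING_TRANSLATIONS_TIMEOUT_MS):
    """Return (send_time_ms, batch) pairs for a debounced batcher.

    Assumed model: each new message restarts the timer, and the batch is
    sent once no message has arrived for `timeout_ms`.
    """
    batches = []
    current = []
    for t in sorted(arrivals_ms):
        # The pending timer already fired before this message arrived.
        if current and t - current[-1] >= timeout_ms:
            batches.append((current[-1] + timeout_ms, current))
            current = []
        current.append(t)
    if current:
        # The last batch goes out one full timeout after its last message.
        batches.append((current[-1] + timeout_ms, current))
    return batches


# One tap: the request still waits out the whole timeout.
single_tap = debounce_send_times([0])

# Scrolling: ten messages become visible 150 ms apart (hypothetical timing).
scrolling = debounce_send_times(range(0, 1500, 150))
first_message_wait_ms = scrolling[0][0] - scrolling[0][1][0]
print(single_tap, first_message_wait_ms)
```

Under these assumptions a single tap sends its request at 200 ms, while the first of the ten scrolled messages waits 1550 ms before its request leaves the device.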

Even without knowing these details, the Product Science tool let us try out a branch without the timeout and test it right away. We rescheduled the network request for the TL_messages_translateText call to an earlier time and tested thoroughly to make sure the fix did not affect functionality. The trace recorded with the after-fix build confirmed that the function responsible for scheduling the network request started almost immediately after the user’s tap, while the functionality remained intact.

Test Results

The improvement was noticeable: the 200 ms timeout (the area between flags 2 and 3, Pic 2) is no longer part of the execution path and no longer delays the moment the user sees the translated results.

Optimization opportunities revealed by the Telegram team

Visibility into code execution is so easy to achieve with the PS Tool that engineers can unlock optimization opportunities on the go. Soon after we submitted our findings and the test results to the repository, the Telegram team came up with some great ideas for optimization:

a) reducing the delay for each subsequent wait,

b) sending the request regardless of the timer once the pending translation is already full at 20 messages or 25k symbols.
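Idea (b) can be sketched as a batcher that hands back a batch as soon as it is full instead of waiting for a timer. This is a minimal Python sketch under stated assumptions, not the change the Telegram team shipped: the class and method names are hypothetical, the limits of 20 messages and 25k symbols come from the list above, and timer handling is left out.

```python
class TranslationBatcher:
    """Collects message texts and reports batches that are ready to send."""

    def __init__(self, max_messages=20, max_symbols=25_000):
        self.max_messages = max_messages
        self.max_symbols = max_symbols
        self.pending = []
        self.pending_symbols = 0

    def add(self, text):
        """Queue `text` and return the batches that should be sent right now."""
        ready = []
        # If this text would overflow the symbol budget, send what we have first.
        if self.pending and self.pending_symbols + len(text) > self.max_symbols:
            ready.append(self.flush())
        self.pending.append(text)
        self.pending_symbols += len(text)
        # A batch that reached the message cap gains nothing from waiting.
        if len(self.pending) >= self.max_messages:
            ready.append(self.flush())
        return ready

    def flush(self):
        """Return the pending batch and reset the state."""
        batch, self.pending, self.pending_symbols = self.pending, [], 0
        return batch


batcher = TranslationBatcher()
for _ in range(19):
    batcher.add("hello")        # returns [] each time: still waiting
sent = batcher.add("hello")     # 20th message: the full batch is returned
print(len(sent[0]))
```

An empty return value means the caller would keep waiting on its timer; a non-empty one means those batches can go to the network immediately. A single text longer than the symbol limit is simply queued on its own here, which a real implementation would need to handle separately.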

Soon after, the Telegram team released an update. We are thrilled that our case study provided valuable insights for improving this innovative feature.

Conclusion

Mobile app performance engineering is at an industry inflection point, and our cutting-edge PS Tool is at the forefront of this evolution. We’re driven to help developers advance beyond legacy performance analysis. Gone are the days of digging through piles of stack traces and logs.

If you're interested in contributing to the development of the Telegram app and are curious about using the Product Science instrumentation and profiler, please get in touch with us.

To learn more about PS Tool, discover how Product Science is reinventing the way developers work.