At Product Science, we care a lot about mobile app performance. Our tools make it easy to identify weak spots in the code, such as slow app startup, inefficient call sequences, and duplicated requests, and to improve them. This performance optimization process has several welcome side effects. One of them is reduced battery consumption.

In this article, we’ll explore the various factors that contribute to battery consumption in mobile apps and discuss how developers can reduce battery drain and make users even happier. The primary focus of this article is on Android OS and devices, but most of the information is applicable to all platforms.

Before we begin, let's agree that quality of life has improved greatly since the smartphone era began. It’s easy to navigate anywhere with a map application, to contact each other by video call or message at any time, and to entertain ourselves by streaming a movie on a train or even an airplane. At the same time, we’ve become dependent on our smartphone batteries, and battery technology has not yet seen a comparable scientific breakthrough.

Table of contents

Consumption scenarios and baseline numbers

- Sources behind the numbers

- Suspended and idle states

- Games

- Video

- Audio

- Calls and text messages

- Web browsing and email

- Camera

- Network

- Sensors

- GPS

- Bluetooth

- Max power

- Summary

- Strategy

- Caching

- Changes only

- Batching

- Media strategy

- Push notifications

- Background restrictions

- Wakelocks

- Power changes

- Summary

- How OS measures battery level

- How OS measures battery consumption by application

- How to measure battery consumption for your app

- Android battery optimizations history

Consumption scenarios and baseline numbers

To discuss power consumption, we should align on some baseline data and scenarios. First, let's discuss the main sources of power consumption in a smartphone. You may come up with a list of sources similar to this:

- Display

- CPU

- GPS

- GSM (2G, EDGE, 3G, 4G, LTE, 5G)

- Wi-Fi

- Bluetooth

- System

It may surprise you to learn which of those sources have the most impact on a battery. Displays are large nowadays and they consume a lot of energy. What about the rest? Which is more draining: GPS, Wi-Fi, GSM or Bluetooth? How should we prioritize consumption issues from this list? It can be difficult to tell how much energy each hardware part consumes.

Of course, the measurements depend on the particular device, usage type, and apps being used. But there are common trends that can help us when developing mobile applications. Let's take a look at each part of the smartphone and analyze its energy consumption. This will help us draw some conclusions on how to develop more battery-efficient apps.

Sources behind the numbers

Some of the numbers below are taken from Google talks, several publications, and my own experiments, but the foundation is the scientific research of Aaron Carroll, a PhD candidate at the time, and his professor Gernot Heiser, a co-founder of Secure Elements, from UNSW Sydney, Australia.

In the research "Understanding and Reducing Smartphone Energy Consumption" they took a Galaxy S3 smartphone, connected some special equipment, and started to measure the power consumption of each hardware part of the smartphone.

The choice of smartphone reflects the fact that the research was conducted quite a while ago, and that documentation and circuit schematics for commercial devices were hard to find. The researchers even had to identify hardware modules on the board in various tricky ways.

The researchers worked hard to avoid any external interference and conducted many experiments in order to avoid bias.

Glossary of the power supplies measured in the research:

- Core – cores of the central processing unit (ARM Cortex-A9 quad-core, 1.4 GHz)

- RAM – memory (1 GiB LP-DDR2)

- GPU – graphics processing unit (ARM Mali-400 MP)

- SoC – rest of system-on-chip supplies (Samsung Exynos 4412)

- MiF – memory interface

- INT – internal

- Cell – GSM radio

- Display – Super AMOLED, 4.8”, 720 × 1280

Suspended and idle states

Let's take a look at how much power a smartphone consumes when suspended (screen off) with different radios switched on or off.

In Airplane mode, it consumes approximately 15 mW. With a 3G radio turned on and without any data transmission, consumption increases to approximately 25 mW. And Wi-Fi in the same state increases consumption to approximately 35 mW.

The fact that an idle Wi-Fi radio consumes more power than an idle 3G was really surprising to me.

If we turn on airplane mode and then turn on the screen, power consumption increases dramatically, to over 800 mW, with no actions from the user and no apps explicitly launched.

By the way, these numbers are consistent with the smartphone specification, which states that the phone will work for around 10 hours in the idle state:

0.805 W / 3.8 V ≈ 0.2118 A = 211.8 mA (Watts / Volts = Amps)

2100 mAh / 211.8 mA ≈ 10 hours of work

Where:

3.8 V = Galaxy S3 battery voltage from the specification

2100 mAh = Galaxy S3 battery capacity from the specification

These numbers should help you understand what all these milliwatts mean physically and in real-life usage.
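The same arithmetic can be applied to any of the average power figures in this article. The sketch below is a rough estimate only: it assumes a constant power draw, a full 2100 mAh battery at a nominal 3.8 V, and no conversion losses or battery aging. The function name is made up for illustration.

```python
def estimated_runtime_hours(power_mw: float,
                            capacity_mah: float = 2100.0,
                            voltage_v: float = 3.8) -> float:
    """Hours a full battery would last at a constant power draw."""
    current_ma = power_mw / voltage_v   # mW / V = mA
    return capacity_mah / current_ma    # mAh / mA = h


# Average power figures quoted in this article (Galaxy S3 measurements).
scenarios_mw = {
    "idle, screen on": 805,
    "audio playback, screen off": 226,
    "Angry Birds": 1516,
    "Need for Speed": 2425,
}

for name, power_mw in scenarios_mw.items():
    print(f"{name}: ~{estimated_runtime_hours(power_mw):.1f} h")
```

For the idle case this prints roughly 9.9 hours, which matches the manual calculation above.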

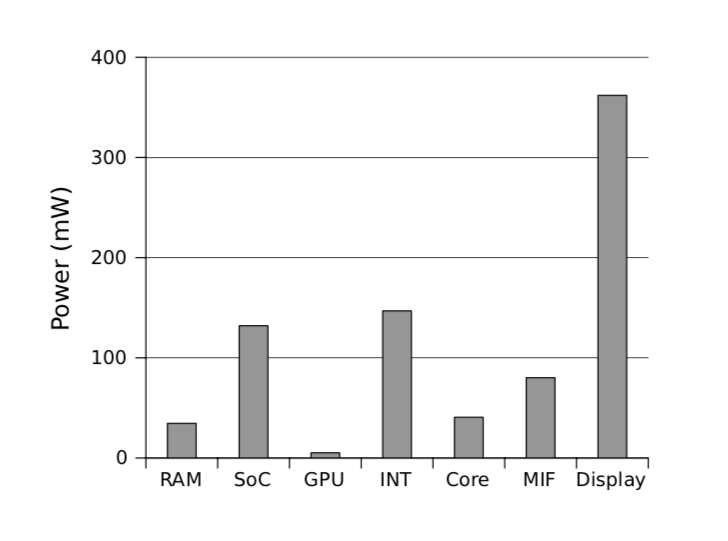

Games

I bet you noticed that games are extremely power demanding. Some games are comparatively simple, but some 3D games are GPU-intensive and, as a result, incredibly battery draining.

With Angry Birds, consumption is significantly lower than with Need for Speed (1516 and 2425 mW respectively). In the latter case, the GPU is particularly power-hungry, using 767 mW (32% of the total).

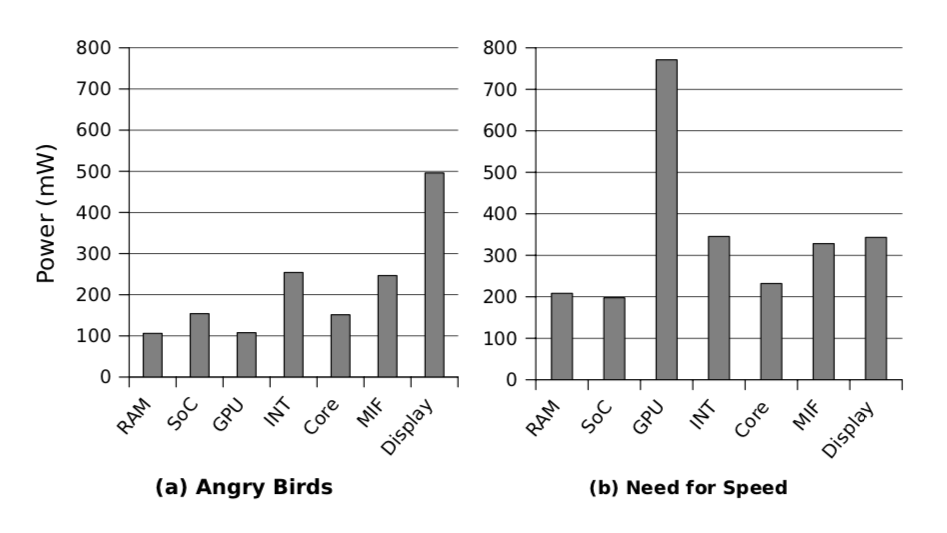

Video

Video playback of two 720p H.264 videos (one at low and one at high quality/bitrate: 1013 kbps / 9084 kbps respectively) clearly shows that software decoding should be avoided at all costs. If you are developing video chats, players, or anything else with video playback, pay attention to the codec you use and what happens with different devices during decoding.

High quality video: 1270 mW for hardware decoding and 2329 mW for software.

Low quality video: 1084 mW for hardware decoding and 1571 mW for software.

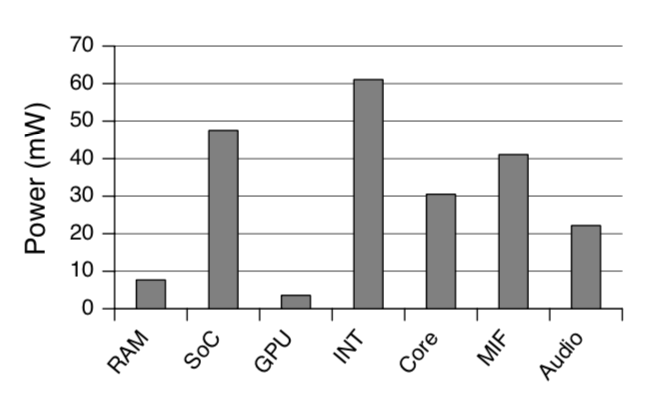

Audio

Audio playback through headphones with minimum volume and a disabled display consumes 226 mW in total, which is quite a good result thanks to special low-power cores/decoders.

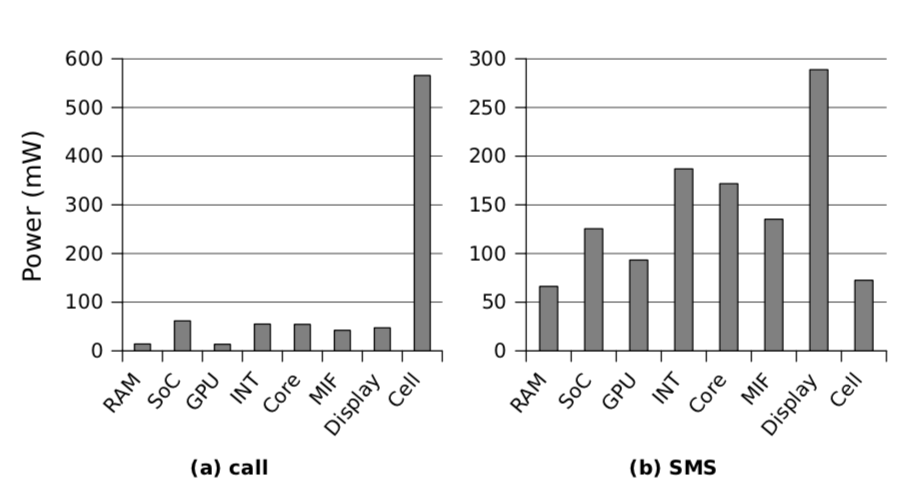

Calls and text messages

A phone call consists of opening the dialer, 10 second ring time, 40 seconds of talk time, then returning to the home screen. For SMS, we include 45 seconds to load the messaging application and type a short message, plus additional time to transmit the message via the 3G cellular network.

The call took 865 mW in total. SMS messages consume around 1140 mW, mostly because of the display.

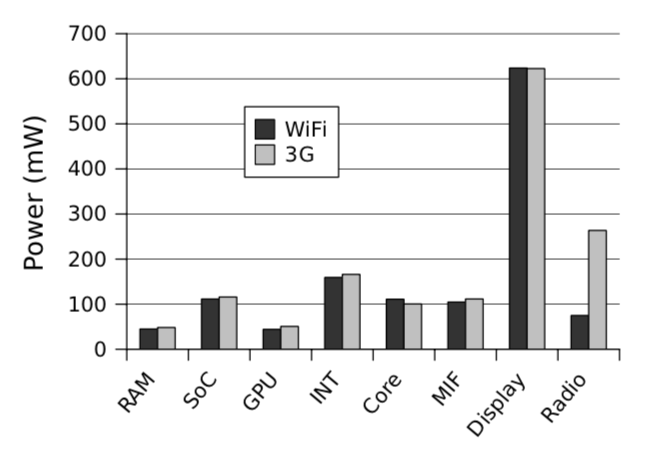

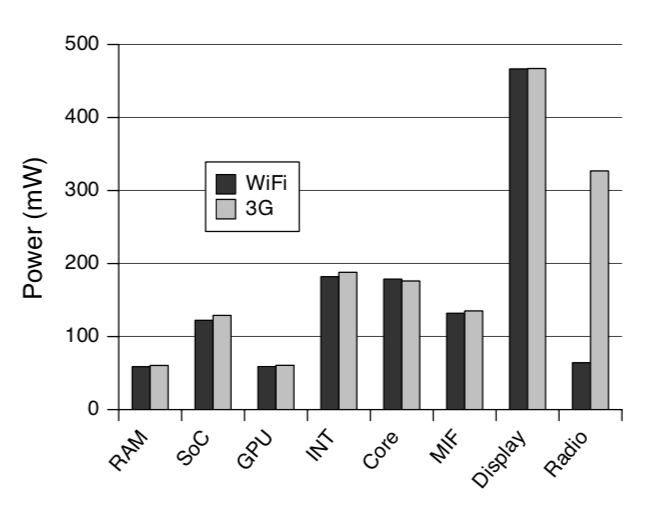

Web browsing and email

This example of a web browsing workload consists of loading the BBC news mobile website, browsing the front page headlines, and reading three articles, for a total of 180 seconds. For the email scenario, a built-in email application was used to fetch and read five emails, one of which contained a 60 KiB image, and to reply to two messages.

Web browsing (the first image) over Wi-Fi consumed 1257 mW and over 3G 1479 mW. These scenarios are the most typical for users, and they reveal a very significant insight.

The GSM radio consumes much more power than the Wi-Fi radio during data transmission, so it is recommended to postpone large data transfers until the phone is connected to Wi-Fi or, at least, to ask the user whether they agree to use the GSM radio or would rather schedule the task for later.

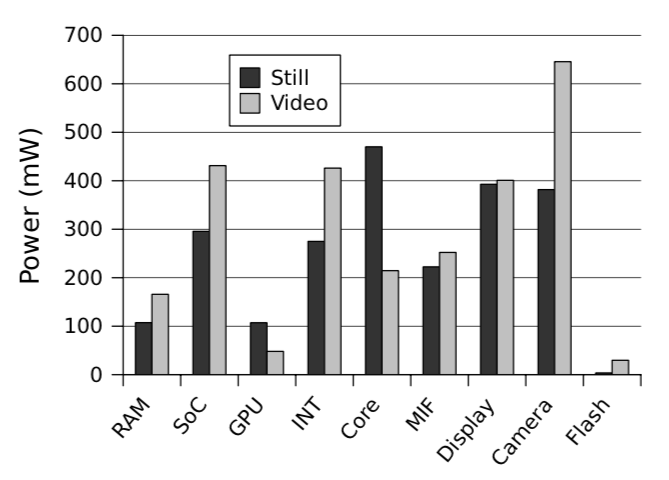

Camera

Let’s observe two scenarios: the still image scenario consists of loading the camera application, focusing on the near-ground object, taking the photo (no flash), and finally viewing the image. The video capture scenario consists of loading the camera application and taking a 20 second video.

As you can see, modern smartphone cameras are a complex mix of software and hardware, and they consume a lot of power. The photo scenario consumed 2256 mW, and the video scenario took 2614 mW.

Network

One more important observation is that uploading over the GSM radio (3G) is about one and a half times as expensive as downloading, according to measurements with the Speedtest.net Android application:

- Upload 1137 ± 372 mW

- Download 768 ± 64 mW

Since it is difficult to control for environmental factors, such as network operator load or signal strength, measurements were conducted 16 times spread over a 24 hour period.

There are different cellular standards supported by operators worldwide: 2G, 3G, 4G, LTE, and 5G. As a general rule, each successive standard consumes a bit more power than the previous one.

By the way, the term LTE is more of a marketing term than a technical one, and was created to disguise some negative real-life experiences. Imagine you are an operator with 3G equipment installed in your area. After some time, the new 4G standard arrives and you want to support it. You can't replace all the equipment at once, so you change it gradually, one location after another. Only once all of the equipment has been upgraded or replaced can you enjoy all the capabilities of the new standard. This transition is what LTE, Long Term Evolution, refers to.

As for 5G, in practice it is almost twice as fast as 4G, reaching up to 1 Gbit/s, with a theoretical peak of around 20 Gbit/s.

Sensors

Consumption was measured for most of the device’s environmental sensors, including:

- Accelerometer 5 ± 2.3 mW

- Gyroscope 30 ± 1.3 mW

- Light meter 3 ± 1.7 mW

- Magnetometer 12 ± 0.6 mW

- Barometer 1 ± 0.7 mW

- Proximity sensor 7 ± 2.2 mW

No surprises here; each of the mentioned items consumes a bit of power, and there is no big risk in using them extensively.

GPS

GPS modules consume a significant amount of power:

- Acquisition 386 ± 19.5 mW

- Tracking 433 ± 21.5 mW

Thankfully, modern smartphones have proprietary services, such as Google Play Services, which have special APIs integrated into the system to work with the GPS module. These APIs simplify usage and reduce consumption. For example, Fused Location Provider API in Android consumes power more efficiently by sharing location data between apps and using not only GPS, but also Wi-Fi and sensors for navigation.

Bluetooth

Unfortunately, the authors were unable to measure Bluetooth consumption directly for technical reasons. However, they were able to measure overall consumption with and without Bluetooth while the display was turned off and audio was being streamed. Here are the results:

- Audio Baseline consumption: total 460 mW, Bluetooth 0 mW

- Bluetooth near: total 496 mW, Bluetooth 36 mW

- Bluetooth far: total 505 mW, Bluetooth 45 mW

I can only add that the Galaxy S3 used in the research has a Bluetooth 4.0 module. Thankfully, the standard has improved since then, and Bluetooth 5 is now considered a low-power part of the phone. The data transmission speed has improved too (raw physical-layer rates):

- Bluetooth 4 LE: ≈ 1 Mbps ≈ 125 kB/s

- Bluetooth 5 LE: ≈ 2 Mbps ≈ 250 kB/s

Max power

It’s also worth mentioning the maximum power consumption recorded during the different measurements:

- Core 2845 mW, AnTuTu Benchmark

- RAM 208 mW, Need for Speed

- GPU 1415 mW, AnTuTu 3DRating

- 3G 1137 mW, Speedtest.net (upload)

- Display 1124 mW, full brightness white screen

Summary

We looked at different scenarios for using a smartphone with a focus on energy consumption. For example, we found out how much phones consume in standby mode and how the network affects battery consumption depending on the type of connection, etc. This research helped us understand the baseline and relative figures that will allow us to make more informed decisions regarding battery saving. Now let's take a closer look at displays.

Displays

There are lots of myths and common beliefs about displays, usually stated without any reliable proof. For example:

- Dark theme helps reduce battery consumption

- Dark theme improves UX

- Higher resolution is better

- Higher refresh rate is better (60/90/120 Hz)

Let’s discuss displays to eliminate all the misunderstandings.

There are two main display types used nowadays: LCD (IPS) and OLED (especially AMOLED).

LCDs (Liquid Crystal Display) work by modulating properties of liquid crystals. They require a backlight in order to illuminate the crystals, so basically it is a display with a large lamp behind it.

OLEDs (Organic Light-Emitting Diodes) work differently; each pixel is made up of tiny organic LEDs that emit light themselves. This display type has several advantages over other technologies:

- No need for a backlight

- True black color (black pixels are simply switched off)

- Can have different subpixel arrangements

As a result, the majority of mobile devices have been moving to OLED in recent years.

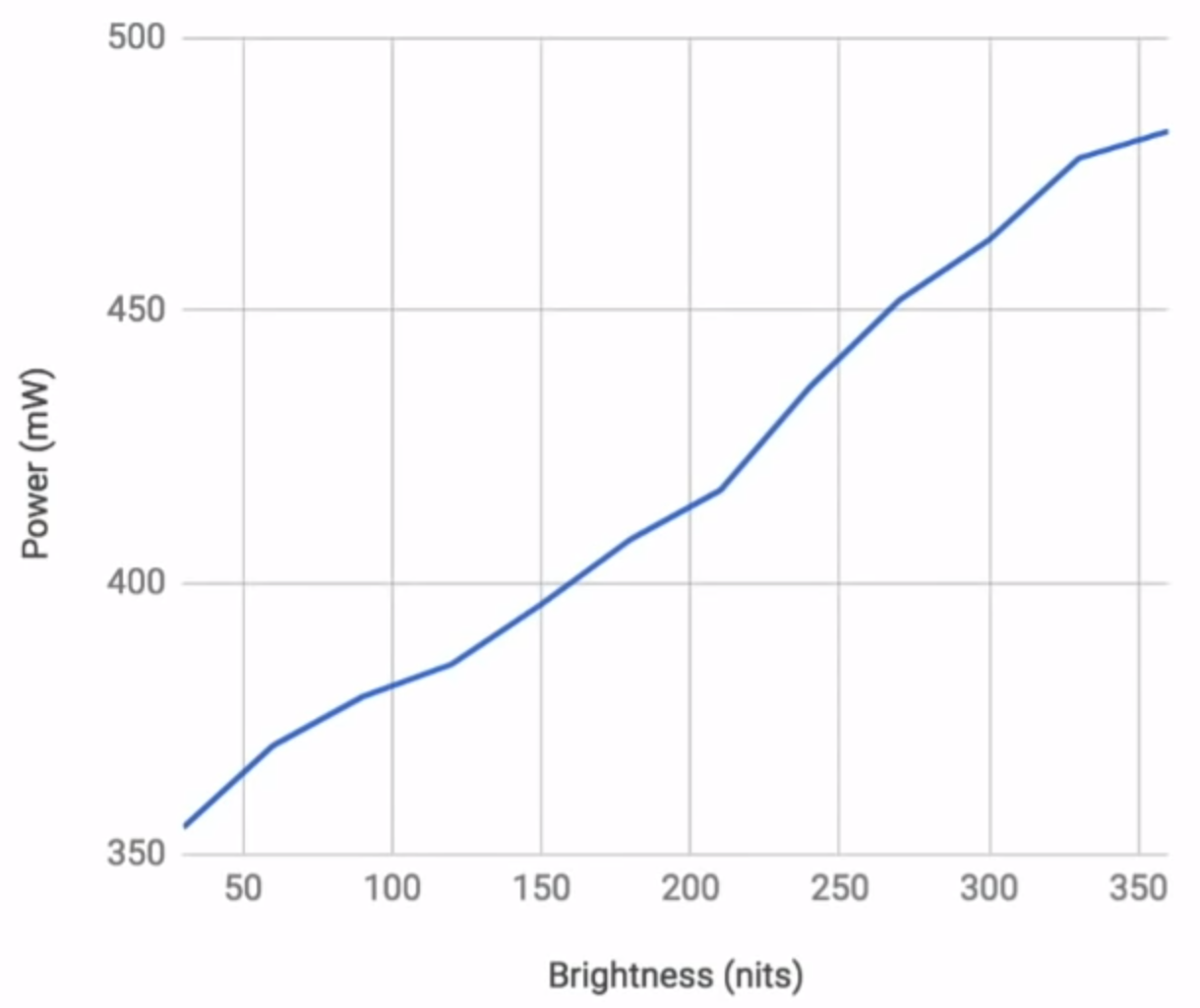

Power consumption

Displays consume a lot, not only because they are so large nowadays, but also because they need power for a backlight or to light up diodes. Therefore, consumption varies depending on the level of brightness. Let's take a look at the graph of the Google Pixel phone measured by Google and published at a Google I/O event.

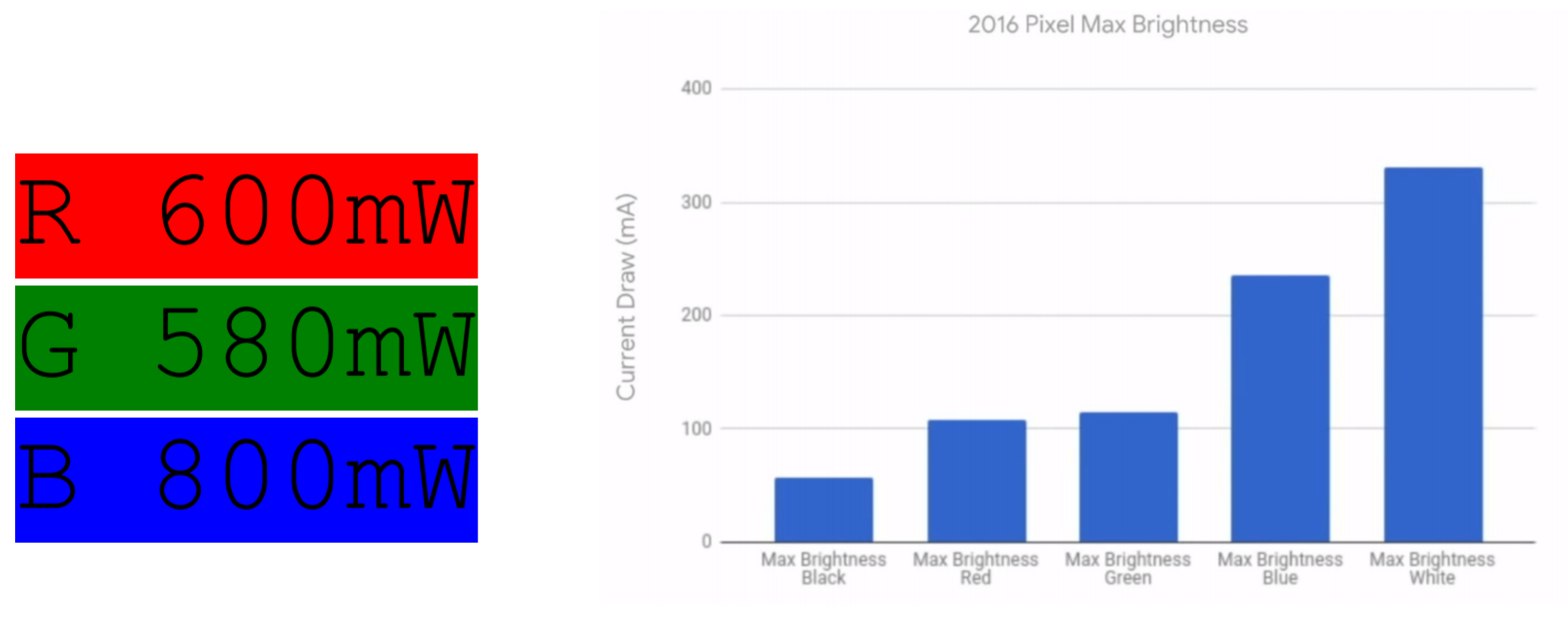

An interesting fact you may not have known is that different colors have different battery costs. For example, blue is much more expensive than green. Let’s take a look at Google's chart of different colors and the electric current they consume, in milliamperes.

Knowing the display type of the Google Pixel (AMOLED) and how these displays work, it is easy to guess that black is the cheapest color to draw and white is the most expensive.

Dark mode

Lots of buzz surrounded battery saving with dark mode some time ago. Is it true? Does only true black (#000000) count, or do any dark colors work?

I bet you already guessed based on previous facts, but let's answer this question formally.

Google Labs took two smartphones, a Google Pixel with an AMOLED display and an iPhone 7 with an LCD display, and opened a screenshot of the Google Maps app in both normal and dark mode on each device. They then measured the battery drain and here are the results:

iPhone 7

Max brightness normal mode: 230 mA

Max brightness night mode: 230 mA

Pixel

Max brightness normal mode: 250 mA

Max brightness night mode: 92 mA

As you can see, the dark mode really affects the power consumption, but only for OLED displays. It won't work the same way on LCD (IPS) screens. The difference is huge: 63% less than light theme mode. Wow!
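The 63% figure follows directly from the currents listed above. Here is a small sketch of that calculation; the function name is made up for illustration.

```python
def relative_saving(light_ma: float, dark_ma: float) -> float:
    """Fraction of current saved by switching from the light to the dark screenshot."""
    return (light_ma - dark_ma) / light_ma


# Max-brightness currents from the measurements above: (normal mode, night mode).
measurements_ma = {
    "iPhone 7 (LCD)": (230, 230),
    "Pixel (AMOLED)": (250, 92),
}

for device, (light, dark) in measurements_ma.items():
    print(f"{device}: {relative_saving(light, dark):.0%} less current in night mode")
```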

You may wonder why anyone is still using light themes for their apps or why light colors are not prohibited in smartphones. The answer is simple – the difference is so dramatic only at maximum brightness. With less brightness, you get more modest numbers, but there is still a chance to lower power consumption by around 5-10%.

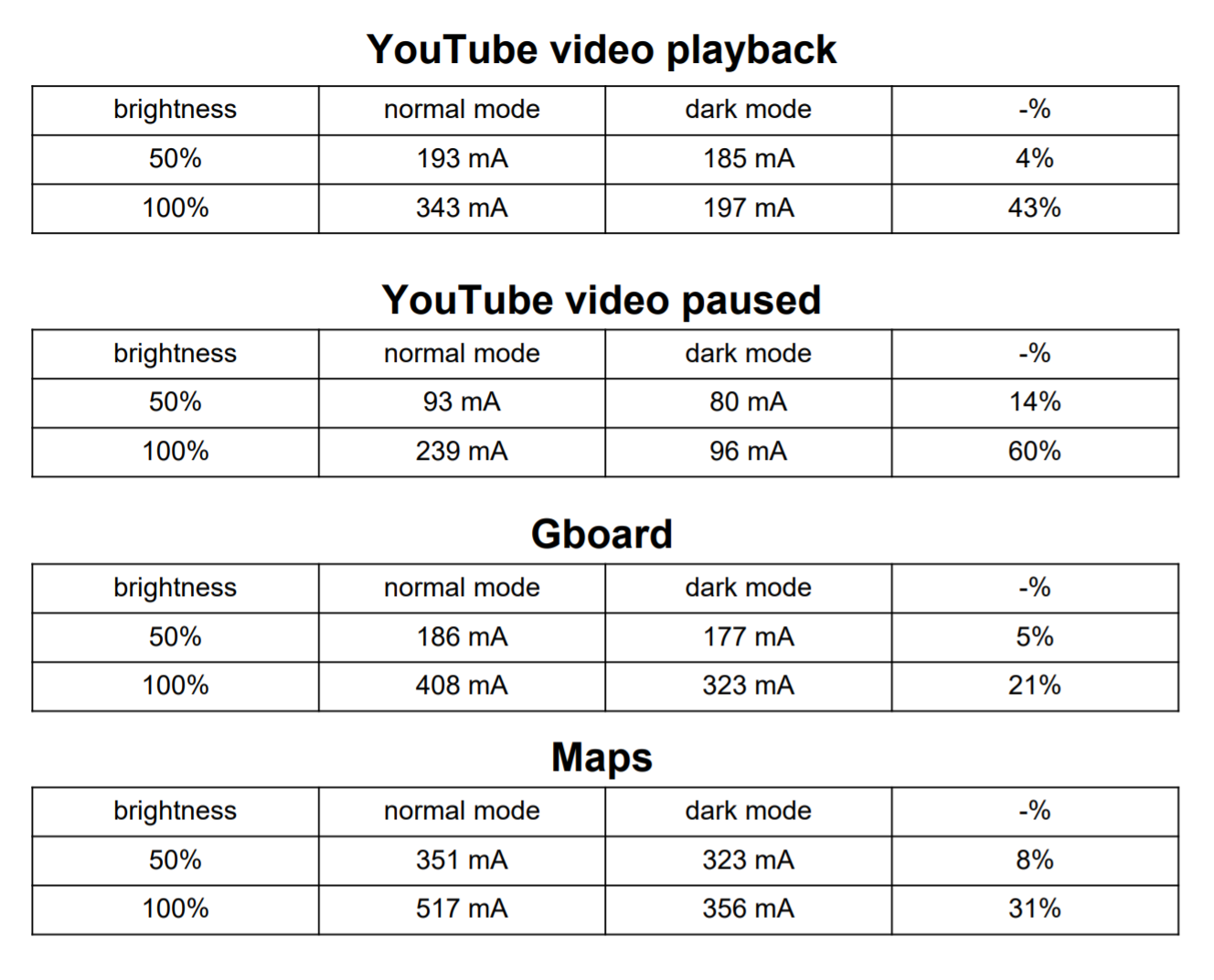

Take a look at the measurements of OLED displays for different apps and cases.

If you, as an app developer, make dark mode the default, you could save some battery for your users.

Let me also leave a few developer comments and facts.

Today, you can easily implement mode switching in your app. For example, in Android, you can extend styles from the Theme.AppCompat.DayNight or Theme.MaterialComponents.DayNight themes, and then use colors with the appropriate qualifiers.

Interesting fact: -night qualifiers were introduced in API level 8 (Android 2.2 Froyo) in 2010, quite a long time ago. However, dark themes didn't become popular until shortly before the Android 10 release in 2019: there were no proper guidelines, operating systems didn’t support system-wide themes, and there were no APIs to detect them. Android 10 then added a dedicated API for checking the user's current theme preference.

It could be reasonable to determine the default theme based on the display type and set a dark theme for OLED. But there are no APIs to check the type of the display.

If you’d like to implement a runtime redraw – a process when you smoothly change an app's theme or just some colors without restarting the app or activity itself – it will take some effort, at least on Android. You will have to do some extra steps and overrides to achieve it, but it is possible.

Oh, I almost forgot about the "Dark vs Light mode" debate in terms of UX. Which is better? If you're interested, take a look at this article, which combines results from several studies and experiments. To cut a long story short, light mode performs slightly better for the human eye. Both modes can also be the right choice for people with particular medical conditions. Besides that, some people simply prefer dark mode.

Overall I consider dark themes to be a good thing in your app if you want a smooth experience for your users.

Resolution

Manufacturers like to use loud marketing titles, such as "best ever super-AMOLED display". In reality, these displays usually ship with more modest defaults, typically the market-median FHD+ resolution, which the advertising does not highlight.

For example, I owned a Galaxy S9 several years ago. It was able to run QHD+ 2960x1440 resolution, but the default out of the box was FHD+ with 2220x1080. Or let's take the Galaxy S21 Ultra with a default FHD+ 2400x1080 resolution, but highlighted in advertisements as WQHD+ 3200x1440.

The reason for this trick is quite simple: higher numbers are easier to sell. But a higher resolution requires more processing resources to render more data, which ultimately leads to faster battery drain. Users definitely won’t be happy about charging more often. And since most people do not even notice the difference between FHD+ and WQHD+ on a smartphone-sized screen, it’s easy to see why the defaults are usually lower. Don’t chase the highest display resolution; it’s expensive and most of us don't need it.

Refresh rate

A trend in recent years is definitely a higher display refresh rate. Of course, it’s nice to have smooth scrolling and animations; compare it yourself here. But is it worth it?

The tradeoff with 90Hz and above displays is substantially reduced battery life. You basically have to render a lot more frequently than previously with the same hardware.

A test of the OnePlus 7 Pro that I found online reported 200 fewer minutes of browsing time in 90Hz mode than in the more standard 60Hz mode. In some cases, that battery tradeoff is simply not worth it, for instance on smartphones that already suffer from questionable battery life. If that is your case, try disabling the 90Hz mode to get a full day’s worth of use.

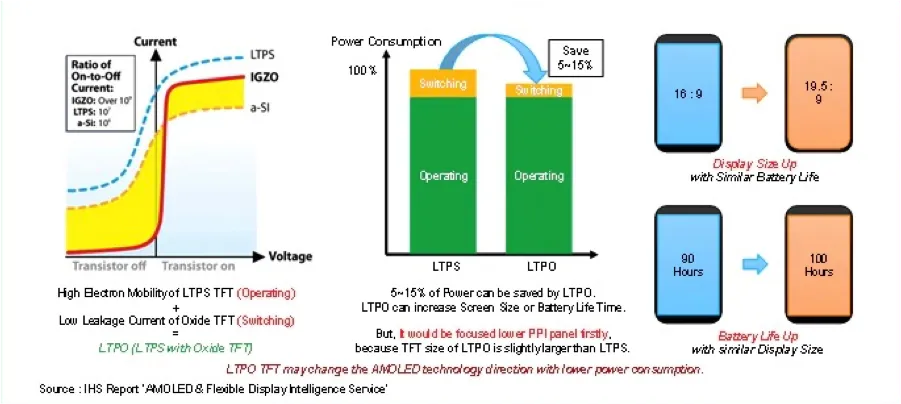

However, progress is unstoppable, and there is a technology that helps overcome this disadvantage. LTPO (Low-Temperature Polycrystalline Oxide) is a display backplane technology that allows a variable refresh rate. In simple terms, it slows the refresh rate down when there is no need for frequent updates. That’s why you’re probably holding a large 120Hz display right now without worrying much about battery drain. It’s also popular in smartwatches for always-on displays.

Summary

We reviewed displays in terms of power consumption. We looked at some numbers, dug into the dark versus light mode question so that we now have solid arguments on the topic, and covered resolution and refresh rate. Hopefully this knowledge will help you understand the technology and what happens behind the scenes, so you can make informed decisions about displays in your apps.

Passive optimizations

Now we know quite a lot about the battery life of smartphones. Let's think of steps that could reduce battery usage and increase app performance.

First, let's discuss possible passive optimizations. I call optimizations passive when they don't affect our app's code directly, but involve infrastructure, protocols, network transmission improvements, etc.

Protocol

Most apps use HTTP/1.1 as the default protocol to request data from a remote server. I bet you know that it has a large transport overhead compared to more modern protocols.

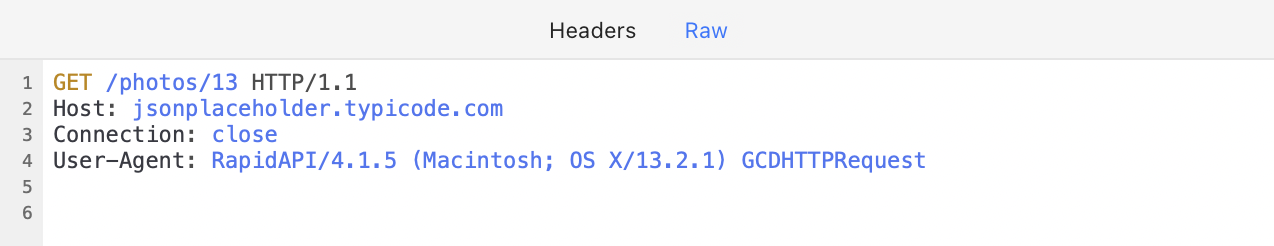

Let's take a look at a typical example of a REST request and response.

It’s not hard to notice that both requests and responses carry header overhead. For a small response, the headers can be a couple of times bigger than the payload itself. Plus, HTTP/1.x sends headers as plain text rather than in a much more compact binary form.
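To get a feeling for the scale of this overhead, you can simply count bytes. The sketch below uses a made-up REST exchange: the host, path, header values, and JSON body are all hypothetical, and real apps often send considerably more headers (cookies, tracing IDs, and so on).

```python
# Hypothetical HTTP/1.1 exchange; every value here is invented for illustration.
body = '{"id":42,"name":"Ann"}'

request_head = (
    "GET /api/v1/users/42 HTTP/1.1\r\n"
    "Host: api.example.com\r\n"
    "User-Agent: ExampleApp/1.0 (Android 13)\r\n"
    "Accept: application/json\r\n"
    "Accept-Encoding: gzip\r\n"
    "Authorization: Bearer <token>\r\n"
    "\r\n"
)

response_head = (
    "HTTP/1.1 200 OK\r\n"
    "Date: Mon, 01 Jan 2024 00:00:00 GMT\r\n"
    "Content-Type: application/json; charset=utf-8\r\n"
    f"Content-Length: {len(body.encode('utf-8'))}\r\n"
    "Cache-Control: no-cache\r\n"
    "Server: example\r\n"
    "\r\n"
)


def header_share(head: str, payload: str) -> float:
    """Fraction of the transferred bytes that is header text rather than payload."""
    head_bytes = len(head.encode("utf-8"))
    payload_bytes = len(payload.encode("utf-8"))
    return head_bytes / (head_bytes + payload_bytes)


print(f"request headers:  {len(request_head.encode('utf-8'))} bytes")
print(f"response headers: {len(response_head.encode('utf-8'))} bytes")
print(f"response payload: {len(body.encode('utf-8'))} bytes")
print(f"header share of the response: {header_share(response_head, body):.0%}")
```

In this toy exchange the response headers are several times larger than the JSON payload they describe.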

Consider migrating to HTTP/2 or any other efficient protocol. It will reduce data transfer volume and, as a result, increase speed and decrease battery consumption.

HTTP/2 advantages:

- Binary

- Multiplexed (several data transfers at the same time)

- Uses one TCP connection

- Header compression

- Bi-directional (server push)

Take a look here and here for the details.

In my practice, I once implemented a custom binary protocol on top of TCP/IP for client-server communication. Compared to HTTP headers, its header was only 10 bytes and contained all the necessary data.
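To illustrate how little space a binary header needs, here is a minimal sketch of a 10-byte frame header. The field layout (version, message type, flags, request ID, payload length) is purely hypothetical and is not the actual layout of the protocol mentioned above.

```python
import struct

# Hypothetical layout, big-endian with no padding:
#   version (1 byte), message type (1 byte), flags (2 bytes),
#   request ID (2 bytes), payload length (4 bytes) = 10 bytes in total.
HEADER = struct.Struct(">BBHHI")


def pack_frame(version: int, msg_type: int, flags: int,
               request_id: int, payload: bytes) -> bytes:
    """Prefix the payload with the fixed-size binary header."""
    return HEADER.pack(version, msg_type, flags, request_id, len(payload)) + payload


def unpack_frame(frame: bytes):
    """Split a frame back into its header fields and payload."""
    version, msg_type, flags, request_id, length = HEADER.unpack_from(frame)
    payload = frame[HEADER.size:HEADER.size + length]
    return version, msg_type, flags, request_id, payload


frame = pack_frame(version=1, msg_type=2, flags=0, request_id=513, payload=b"hello")
print(HEADER.size, len(frame))
print(unpack_frame(frame))
```

A fixed-size header like this also makes parsing cheap: the receiver reads 10 bytes, learns the payload length, and reads exactly that many bytes more.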

A custom binary protocol could also be a good option to consider if you have the resources and expertise. But most developers prefer ready-made solutions so as not to spend lots of time on a custom protocol setup. I would recommend gRPC as a typical solution, which I will mention in the next section.

Bi-directional communication could also improve performance and decrease battery consumption. With server pushes, you can reduce polling requests and be notified when data has been changed.

Data compression and serialization

Now let’s talk about the payload itself. If your app transfers a significant amount of data, serialization and compression algorithms are very important.

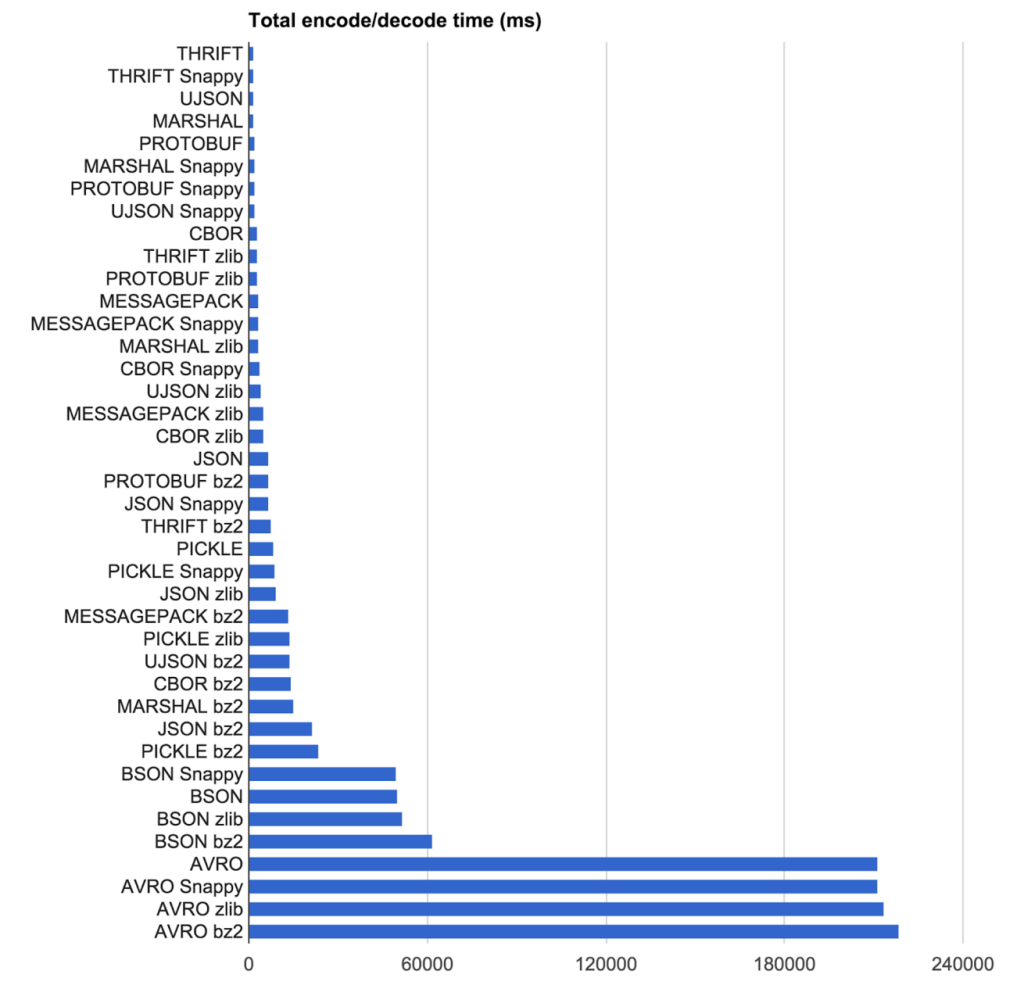

If you still transfer raw JSON, there is a huge opportunity to improve the overall performance. Even if you have enabled gzip compression, you could still do much better. Here is a comparison of data serialization and compression algorithms made by Uber when they were choosing their own stack.

I personally prefer schema-based formats for data transfer. My choice for today is Protobuf. It shows some of the best performance results and decreases payload size a lot, even compared with a gzipped JSON payload. For schemaless data serialization I would prefer CBOR.
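You can run a quick experiment of your own before committing to a format. The sketch below uses only the Python standard library, so it does not use Protobuf itself: a fixed `struct` layout stands in for a schema-based binary encoding, purely as an assumption for illustration. The payload of 200 location records is made up as well.

```python
import gzip
import json
import struct

# Hypothetical payload: 200 location records.
records = [{"id": i, "lat": 52.0 + i * 0.001, "lon": 13.0 + i * 0.001}
           for i in range(200)]

raw_json = json.dumps(records).encode("utf-8")
compact_json = json.dumps(records, separators=(",", ":")).encode("utf-8")
gzipped_json = gzip.compress(raw_json)

# Stand-in for a schema-based binary format: the field names live in the
# schema, so only the values are sent (uint32 id, float64 lat, float64 lon).
RECORD = struct.Struct("<Idd")
packed = b"".join(RECORD.pack(r["id"], r["lat"], r["lon"]) for r in records)

for label, data in [("JSON", raw_json),
                    ("JSON without whitespace", compact_json),
                    ("gzipped JSON", gzipped_json),
                    ("fixed binary layout", packed)]:
    print(f"{label}: {len(data)} bytes")
```

The exact numbers depend heavily on your data, so measure with payloads that are typical for your app rather than relying on a synthetic example like this one.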

A good overall choice for the client-server interconnection could be gRPC. It is a high-performance, language-agnostic RPC framework based on the Protobuf data format.

Compression algorithms are worth mentioning too. In one of my previous projects, we measured our data flow and chose LZ4 instead of the typical ZLIB. LZ4 works faster and is less CPU-intensive, but its compression ratio is lower than ZLIB's. It was a high-load project with a lot of users and servers to maintain, so it made sense to reduce the CPU load in order to accommodate more users per server. More details about LZ4 can be found here.

So, the main advice here is to try compressing and serializing your typical data and then decide based on what suits you better. For example, if your data is quite large and you need fast access to some parts of it without parsing the whole payload, that is a call for FlatBuffers or something similar.

Media formats

If your app uses media extensively, you should definitely consider upgrading media formats. Many services still use JPG and PNG as their main image formats.

You could significantly decrease network traffic if you migrate to WebP. WebP is an image file format that was developed by Google in 2010. It is designed to be a more efficient alternative to traditional image file formats like JPEG and PNG, with the goal of reducing image file sizes without sacrificing image quality.

WebP uses both lossy and lossless compression techniques to achieve smaller file sizes. In lossy compression, some data is discarded to reduce the file size, while in lossless compression, no data is lost. WebP supports both types of compression, which makes it a versatile image format.

- WebP lossless images are 26% smaller in size compared to PNGs

- WebP lossy images are 25-34% smaller than comparable JPEG images at equivalent SSIM quality index

- Supports transparency (alpha channel) at a cost of just 22% additional bytes

The same applies to video content. There are formats that can replace the traditional ones to decrease traffic and, as a result, increase performance and reduce the impact on the battery. One example is WebM, an open-source video file format also developed by Google. It has the necessary features to work with HTML and does not require extensive system resources for compressing and decompressing video data.

WebM also compares well with MP4. According to an experiment conducted by Darvideo.tv, the two formats are on par in quality, while WebM produced files about five times smaller.

Both mentioned formats are open and optimized for a low computational footprint, so they should serve well for low-power smartphones, notebooks, etc. Additionally, both formats are supported by browsers and smartphone operating systems. And don't stop with these – feel free to find other formats that suit your case better.

Network optimizations

Connection reuse

Each connection takes time to establish, so be reasonable, and make sure that you reuse connections to the same servers.

I have witnessed cases where different parts of an app used different HTTP clients and did not share connections. In other cases, after a successful data transmission on Screen A, the network client was released and the connection closed, and a moment later Screen B had to create a new client to send requests to the same server.

When services are located on different hosts in the same private network, such as service1.my-server.com and service2.my-server.com, consider creating a reverse proxy server to improve connection reuse and overall performance. Such a reverse proxy could also play the role of a TLS termination server to further improve performance.

Use HTTP/2 instead of older versions to reuse one connection for several logical streams in parallel. For example, in the JVM stack, Netty and Jetty support HTTP/2, and so do the frameworks built on them. Just find an appropriate HTTP/2 web server for your stack and try it.

Session IDs / session tickets

It is well known that TLS connections take more time and require more CPU resources than unencrypted ones. TLS lengthens connection establishment, and depending on your server resources and the number of users, it can slow the process down even further.

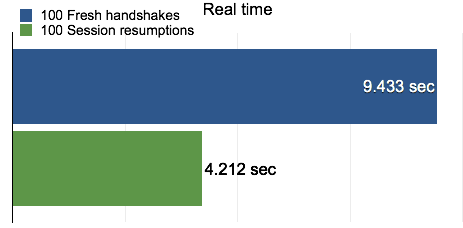

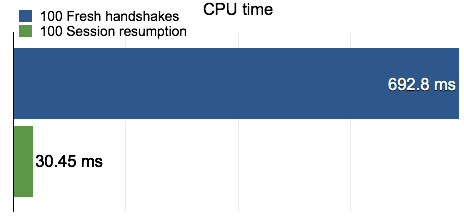

Assuming your app users are visiting your app regularly, you could use a session resumption mechanism to improve the process. There are two main standards here: Session IDs and Session Tickets. The first one means that the server caches TLS session information, and when a client reconnects to the server with a session ID, the server can quickly look up the session keys and resume encrypted communication. However, this could cause scalability issues as servers are responsible for remembering negotiated TLS sessions. This is what Session Tickets can help us with by outsourcing storage of session information to clients.

Cloudflare measured that the overall cost of a session resumption is less than 50% of a full TLS handshake, mainly because session resumption only costs one round-trip while a full TLS handshake requires two. Furthermore, a session resumption does not require any large finite field arithmetic (new sessions do), so the CPU cost for the client is almost negligible in comparison to that in a full TLS handshake. For mobile users, the performance improvement through session resumption means a much more responsive and battery-life-friendly experience.
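A back-of-the-envelope model shows how connection reuse and session resumption add up. This sketch only counts round trips, using the figures quoted above (two round trips for a full TLS handshake, one for a resumed session) plus one round trip for the TCP handshake. The 100 ms round-trip time and the five requests are assumptions, and CPU cost is ignored.

```python
TCP_HANDSHAKE_ROUND_TRIPS = 1
FULL_TLS_ROUND_TRIPS = 2      # full handshake, as described above
RESUMED_TLS_ROUND_TRIPS = 1   # session resumption, as described above


def setup_latency_ms(connections: int, rtt_ms: float, resumed: bool) -> float:
    """Time spent only on establishing connections, before any request is sent."""
    tls_round_trips = RESUMED_TLS_ROUND_TRIPS if resumed else FULL_TLS_ROUND_TRIPS
    return connections * (TCP_HANDSHAKE_ROUND_TRIPS + tls_round_trips) * rtt_ms


RTT_MS = 100.0   # assumed mobile round-trip time
REQUESTS = 5     # assumed number of requests to the same server

print("new connection per request, full handshake:",
      setup_latency_ms(REQUESTS, RTT_MS, resumed=False), "ms")
print("new connection per request, resumed session:",
      setup_latency_ms(REQUESTS, RTT_MS, resumed=True), "ms")
print("one reused connection, full handshake:",
      setup_latency_ms(1, RTT_MS, resumed=False), "ms")
```

Under these assumptions, reusing a single connection saves more than resumption alone, and the two techniques complement each other: reuse within a session, resumption between sessions.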

Summary

You could improve your app's battery consumption a lot without having to make global changes to the mobile app itself. There are lots of opportunities here such as an efficient protocol, bi-directional communication, compact serialization, efficient compression, improved connection setup, and modern media formats.

Active optimizations

When developing mobile applications, you have to follow different routines than those for frontend or backend development. The main factors are:

- Mobile hardware usually is less performant than desktop/laptop hardware. While there have been significant improvements in the industry in recent years, the growing number of low budget devices brings down the relative impact of improvements in hardware of high budget devices

- Mobile networks are less reliable than broadband networks

- Usage pattern is different compared to desktop

- Different app lifecycle. A mobile operating system could evict apps from memory or limit their background activities

- Interfaces are different: touch in mobile vs pointers in desktop

Now let's review active optimization techniques that mobile developers can use to significantly improve app performance and decrease battery drain.

Strategy

When somebody asks me how to make a robust mobile app in terms of battery consumption, I usually answer “Lazy First”.

Making your app Lazy First means looking for ways to reduce and optimize operations that are particularly battery-intensive. The core questions underpinning Lazy First design are:

Reduce: Are there redundant operations your app can cut out? For example, can it cache downloaded data instead of repeatedly waking up the radio to re-download the data?

Defer: Does an app need to perform an action right away? For example, can it wait until the device is charging before it backs data up to the cloud?

Coalesce: Can work be batched, instead of putting the device into an active state many times? For example, is it really necessary for several dozen apps to each turn on the radio at separate times to send their messages? Can the messages instead be transmitted during a single awakening of the radio?

You should ask these questions when it comes to using the CPU, the radio, and the screen. Lazy First design is often a good way to tame these battery killers.

Previously it was a bit harder to implement this strategy properly, but now there are system APIs, such as JobScheduler or the WorkManager library on Android, that simplify the process. With their help you can schedule any job with declarative constraints:

- Particular network type (connected, metered, not roaming, etc)

- BatteryNotLow

- RequiresCharging

- DeviceIdle

- StorageNotLow
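The idea behind such declarative constraints can be modeled in a few lines. The sketch below is a language-agnostic illustration of the "Defer" principle, not the JobScheduler or WorkManager API: all class and field names are hypothetical, and the real libraries also handle persistence, retries, and process death.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class DeviceState:
    charging: bool
    unmetered_network: bool
    battery_low: bool


@dataclass
class Job:
    name: str
    run: Callable[[], None]
    requires_charging: bool = False
    requires_unmetered: bool = False
    requires_battery_not_low: bool = False

    def can_run(self, state: DeviceState) -> bool:
        """A job is runnable only when every declared constraint is satisfied."""
        if self.requires_charging and not state.charging:
            return False
        if self.requires_unmetered and not state.unmetered_network:
            return False
        if self.requires_battery_not_low and state.battery_low:
            return False
        return True


class LazyScheduler:
    """Holds jobs until the device state satisfies their constraints."""

    def __init__(self) -> None:
        self._pending: List[Job] = []

    def enqueue(self, job: Job) -> None:
        self._pending.append(job)

    def on_state_changed(self, state: DeviceState) -> List[str]:
        runnable = [job for job in self._pending if job.can_run(state)]
        self._pending = [job for job in self._pending if not job.can_run(state)]
        for job in runnable:
            job.run()
        return [job.name for job in runnable]


scheduler = LazyScheduler()
scheduler.enqueue(Job("cloud-backup", run=lambda: print("backing up"),
                      requires_charging=True, requires_unmetered=True))

on_the_go = DeviceState(charging=False, unmetered_network=False, battery_low=False)
at_home = DeviceState(charging=True, unmetered_network=True, battery_low=False)

print(scheduler.on_state_changed(on_the_go))  # backup stays deferred
print(scheduler.on_state_changed(at_home))    # backup runs now
```

The app only declares what the job needs; deciding when to run it is left to the scheduler, which is what allows the system to pick a cheap moment.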

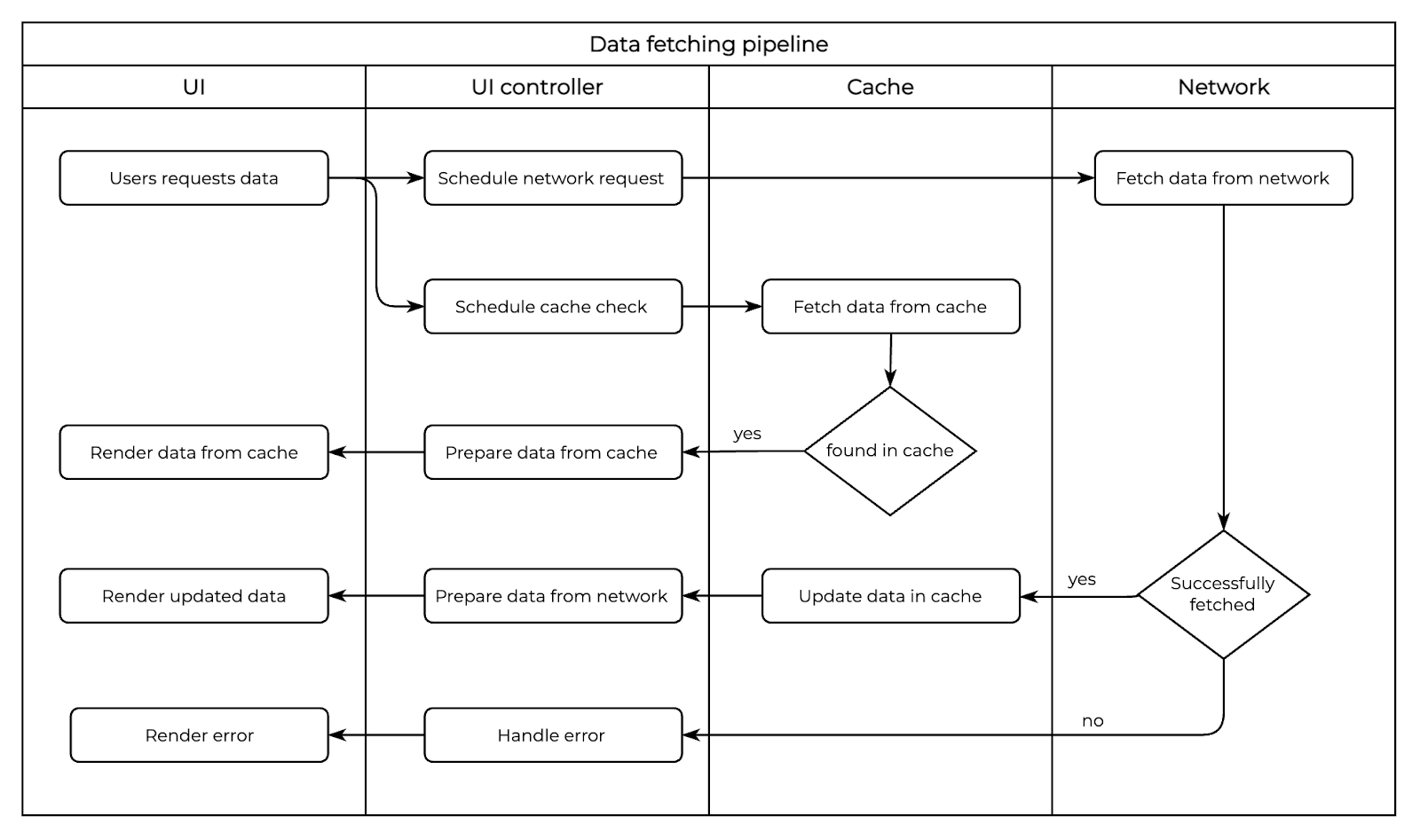

Caching

Long ago, when I was just starting my journey in mobile development, I came across a video from one of the first Google I/O events, I believe it was 2008, where an engineer kept repeating a mantra of "persist often, persist early".

Taking into account the previously mentioned factors, the best strategy for appearing fast and reliable is to cache as much as possible, as often as possible.

If you receive a resource from your server, store it immediately, even before you show it to the user or in parallel with showing it. The next time the user opens your app, you can show this data almost instantly while a slow synchronization with your server runs over a network with weak coverage.

Popular image libraries usually have built-in caching functionality, but sometimes we ignore files and data entities received from the backend. This data should also be stored in a file or database.

Caching everything can look like overhead, but if you set up a proper data propagation pipeline, you won't even notice it.

This will help your app look fast and reliable even when the network isn’t the best.
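The "store it immediately, show the cached copy next time" idea can be sketched in a few lines. This is only an illustration in Python: the `CachedResource` class, the `fake_fetch` function, and the JSON file layout are assumptions made for the example, not part of any particular library.

```python
import json
import os
import tempfile
from typing import Callable, Optional


class CachedResource:
    """Hypothetical cache-first wrapper around a slow network fetch."""

    def __init__(self, cache_path: str, fetch: Callable[[], dict]):
        self.cache_path = cache_path
        self.fetch = fetch

    def load_cached(self) -> Optional[dict]:
        # Returns the last stored copy, or None if nothing was cached yet.
        try:
            with open(self.cache_path, "r", encoding="utf-8") as f:
                return json.load(f)
        except (FileNotFoundError, json.JSONDecodeError):
            return None

    def refresh(self) -> dict:
        # Persist the fresh response before handing it to the caller.
        data = self.fetch()
        tmp_path = self.cache_path + ".tmp"
        with open(tmp_path, "w", encoding="utf-8") as f:
            json.dump(data, f)
        os.replace(tmp_path, self.cache_path)  # swap in the new file in one step
        return data


def fake_fetch() -> dict:
    # Stand-in for a real server request (assumption for this example).
    return {"clients": ["Alice", "Bob"]}


cache_file = os.path.join(tempfile.mkdtemp(), "clients.json")
resource = CachedResource(cache_file, fake_fetch)

print(resource.load_cached())  # None on the very first launch
resource.refresh()             # network round trip, result is stored
print(resource.load_cached())  # later launches can show this instantly
```

On the next start, the UI would render `load_cached()` right away and call `refresh()` in the background.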

Changes only

Smartphone resources are limited: less processing power, a slower network, and a smaller battery. Don't fetch anything if there have been no changes since the last update.

Try to organize data interchange with only new changes. For example:

- Fetch a list of, let's say, clients

- Remember the maximum last update time of each entity in the response

- When the app or screen is started again, load previous data from the cache

- Request new data passing the maximum last update time from the previous response

- Let your server return only new or updated entities since the last request

- Update UI with new entities

This approach will decrease overall traffic for your service and not only save battery resources, but also help your app look very performant.
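The steps above can be sketched as follows. The in-memory `SERVER_CLIENTS` dictionary and the `server_get_clients` function stand in for a real backend endpoint, and the `updated_at` field name is an assumption; the point is only the shape of the exchange.

```python
from typing import Dict, List

# Hypothetical server-side data: id -> entity with an "updated_at" timestamp.
SERVER_CLIENTS: Dict[int, dict] = {
    1: {"id": 1, "name": "Alice", "updated_at": 100},
    2: {"id": 2, "name": "Bob", "updated_at": 120},
}


def server_get_clients(updated_since: int) -> List[dict]:
    """Stand-in for an endpoint that returns only entities changed after a timestamp."""
    return [c for c in SERVER_CLIENTS.values() if c["updated_at"] > updated_since]


class ClientCache:
    """Local cache that remembers the newest update time it has seen."""

    def __init__(self) -> None:
        self.entities: Dict[int, dict] = {}
        self.last_sync = 0

    def sync(self) -> int:
        # Ask only for what changed since the previous response.
        changed = server_get_clients(self.last_sync)
        for entity in changed:
            self.entities[entity["id"]] = entity
        if changed:
            self.last_sync = max(e["updated_at"] for e in changed)
        return len(changed)


cache = ClientCache()
print(cache.sync())  # 2: the first sync transfers everything
print(cache.sync())  # 0: nothing changed, nothing transferred

SERVER_CLIENTS[2] = {"id": 2, "name": "Robert", "updated_at": 130}
print(cache.sync())  # 1: only the updated entity is transferred
```

A production version would also need to handle deletions and entities that share the same timestamp, which this sketch ignores.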

Batching

First of all, I want to emphasize that a wireless GSM chip is different from a typical wired network adapter. We as developers tend to think of Internet access as being like a faucet with two states: on and off. In reality, wireless radios tend to have four states:

- Off mode

- Standby mode

- Low power mode

- Full power mode

Not surprisingly, the power draw for full power is substantially more than low power, which in turn is more than standby.

There is some latency to move from standby or low power to full power. This slows down data transfer while the radio “warms up”.

The idle time needed to transition to a lower power state is substantial, with values in the 5-15 second range well within reason. This means that making a request has a lingering power cost even after our request has completed.

It takes time and power to warm up from standby mode to full power mode. That is the main reason behind batching work, and that is why operating systems try to synchronize transmission wake-ups across apps (we will discuss it in more detail later).

Batching is important not only for your app, but also for the hosting system. If apps are constantly waking up the CPU and network, it is no surprise that the battery will be drained. However, if all of the apps on the phone have special maintenance windows to make their batched requests, it could save a lot of resources.
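A rough back-of-the-envelope model shows why the number of radio wake-ups matters more than the number of requests. Every constant below is an assumption chosen for illustration (only the 10-second tail falls within the 5-15 second range mentioned above), and the model ignores ramp-up latency, signal strength, and the intermediate low power state.

```python
import math

# Illustrative assumptions, not measured values.
FULL_POWER_MA = 300.0    # assumed radio draw in full power mode
TAIL_SECONDS = 10.0      # assumed time the radio lingers at full power after a burst
TRANSFER_SECONDS = 0.5   # assumed time to transfer one small request


def radio_cost_mah(total_requests: int, batch_size: int) -> float:
    """Estimated radio energy for sending all requests in batches of batch_size."""
    wakeups = math.ceil(total_requests / batch_size)
    # Each wake-up pays the tail once; each request pays its own transfer time.
    active_seconds = wakeups * TAIL_SECONDS + total_requests * TRANSFER_SECONDS
    return FULL_POWER_MA * active_seconds / 3600.0


for batch in (1, 6, 48, 384):
    print(f"batch of {batch:>3}: {radio_cost_mah(1400, batch):8.1f} mAh")
```

In this model the transfer cost is identical for every batch size; the whole difference comes from how many times the tail is paid.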

Network requests batching experiment

Some time ago, I decided to conduct an experiment to see how request batching affects energy consumption.

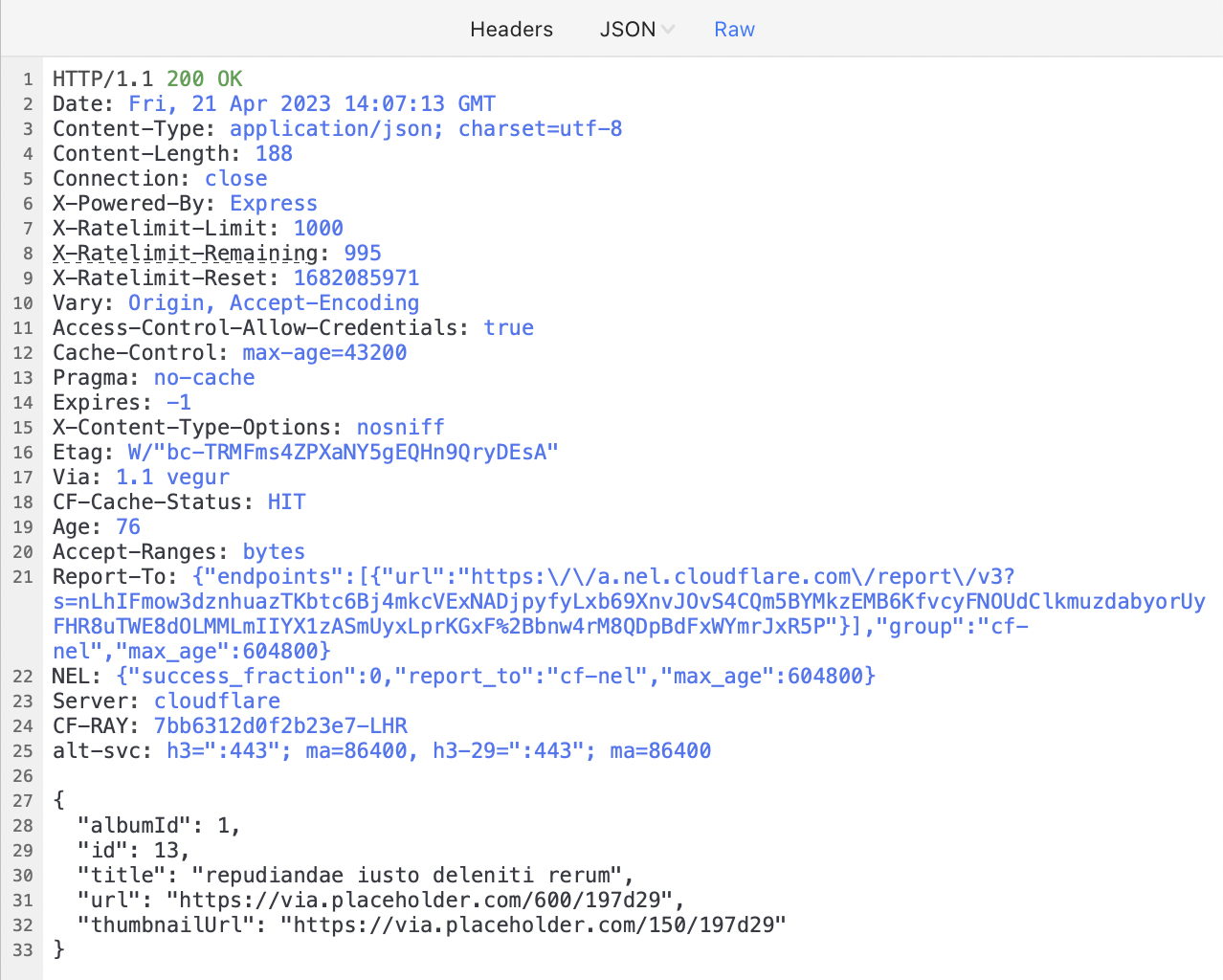

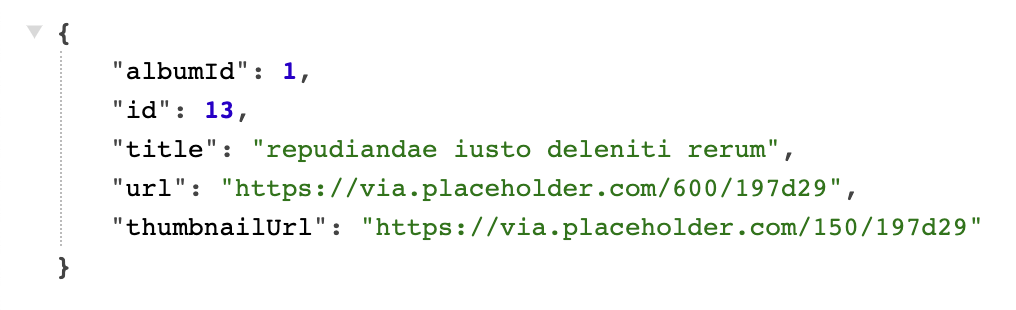

The idea was to write a test app that makes regular HTTP requests to a sample REST API. The number of requests for each step of the experiment was 1400.

Request count: 1400

API calls to: https://jsonplaceholder.typicode.com/photos/13

Response sample:

Experiment steps looked like this:

- 1 request every 10 seconds

- 3 requests every 30 seconds

- 6 requests every 1 minute

- 12 requests every 2 minutes

- 24 requests every 4 minutes

- 48 requests every 8 minutes

- 96 requests every 16 minutes

- 192 requests every 32 minutes

- 384 requests every 64 minutes

The screen was turned off during the experiment and no other apps were running. Furthermore, most of the apps were uninstalled to avoid any interference.

Wi-Fi was off and the GSM-radio was on with a typical 3G/4G connection. All steps were conducted in the same location to avoid any network impact.

After all 1400 requests had been completed, I measured the impact of the application on the battery as a percentage from OS battery usage stats in system settings.

I took my two old smartphones, a Nexus 5 and a Google Pixel, and conducted the described scenarios. Here are the results:

As you can see, when we make frequent requests, our app consumes tens of percent of the battery. For example, if we make 3 requests every 30 seconds with a Nexus 5 smartphone, we will drain 13% of the battery for 1400 small requests.

However, if we batch our requests and do the same amount of work in batches of 384 requests every 64 minutes, battery consumption decreases almost tenfold. This is a striking illustration of the importance of batching.

You may notice a couple of asterisks (*) in the Google Pixel results. I left these intentionally to show an interesting fact: mobile networks are quite fragile in terms of signal strength. One asterisk indicates that a large sporting event was held in the city during the measurement, causing the network to be less reliable and requiring more power to do the job. The second asterisk shows that the step required more power than expected because the measurements were conducted during heavy rain. Thus, weather and other conditions can significantly affect radio signal and power consumption.

Also worth mentioning is the difference between the results for the Google Pixel and the Nexus 5. The numbers are higher for the Pixel, which could be explained by the fact that the Nexus ran Android 6 (M), while the Pixel ran Android 8 (O), which introduced many battery improvements. These improvements decreased interference from other apps, which is why the test app's numbers are higher. This suggests that the improvements made by the Android team were justified.

Disk batching

You may be surprised, but batching could be helpful not only with network requests, but with disk I/O too. You can notice this in something like SQLite, where wrapping a bunch of INSERT statements into a single transaction can have substantial benefits in terms of how long the I/O takes.

According to Mark Murphy, the author of “The Busy Coder's Guide to Android Development” and other popular Android books:

- Writing 1 GB with a 1 KB buffer is 2x more expensive than writing it with a 10 MB buffer

- Writing 1 GB with a 100 B buffer is 5x more expensive than writing it with a 1 KB buffer

Hence, you want to try to batch up your disk I/O, where possible, to do fewer, bigger operations, rather than lots of little ones. This includes:

- Batching database I/O in a transaction, as noted above

- Caching data that you intend to log to disk in memory and only writing when your in-memory buffer reaches a certain size or age (though beware the dangers of your process being terminated before you get a chance to write the data)

- Consider using larger buffer sizes with BufferedInputStream and BufferedOutputStream, if you can afford the heap space, though the 8KB defaults are not that bad

As a rough model, consider disk I/O to draw ~200mA. The smaller the I/O operations, the more time it takes you to accomplish the work, and hence the less efficient those operations are.

While disk I/O is relatively expensive while it is occurring, most apps are not continuously reading or writing, and therefore the total impact to the battery will not be that bad. Apps that do continuously use the disk — such as music or video players — will consume quite a bit of power.

So, every time you run database transactions, write a log to a file, or store image/video files, try to batch the work and use larger buffers.
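As an illustration of the transaction advice, here is a small sketch using Python's built-in `sqlite3` module. The `log` table and the two helper functions are made up for the example. An in-memory database is used so the snippet runs anywhere, which also means it will not show the I/O difference itself; to measure it, point `connect` at a file on disk and time both helpers.

```python
import sqlite3


def insert_one_by_one(conn: sqlite3.Connection, rows) -> None:
    # Anti-pattern: every row is committed in its own transaction.
    for row in rows:
        conn.execute("INSERT INTO log(message) VALUES (?)", row)
        conn.commit()


def insert_batched(conn: sqlite3.Connection, rows) -> None:
    # All rows share one transaction, committed once when the block exits.
    with conn:
        conn.executemany("INSERT INTO log(message) VALUES (?)", rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE log(id INTEGER PRIMARY KEY, message TEXT)")

rows = [(f"event {i}",) for i in range(1000)]
insert_batched(conn, rows)

print(conn.execute("SELECT COUNT(*) FROM log").fetchone()[0])  # 1000
```

The same pattern applies on Android, where the equivalent is wrapping the inserts in a single database transaction.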

Media strategy

When working with media, try not to download full-size resources until explicitly requested. Use thumbnails and previews instead.

Ask about the quality whenever possible. When it’s not possible, make a sensible tradeoff between quality and performance, or make quality changeable depending on current battery and network conditions.

Don't download any large media files when the user is roaming. It won't affect battery life, but it is considered good UX not to waste a lot of data while abroad.

We have already discussed caching, but to reiterate: try to cache media if possible. This will save a lot of battery and metered traffic for your users. Be especially careful when uploading media because, as we discovered previously, uploading consumes much more power than downloading.

For example, if you upload a photo in a chat app, there is no need to upload the same photo to different chats again and again. Your client should send only an upload ID to the backend if this photo has been uploaded before.

If media files are repetitive in your app, try calculating a hash of the file and checking if your backend has already received the file with the same hash before.
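A minimal sketch of the hash check might look like this. The `FakeBackend` class and its `find_upload` and `upload` methods are hypothetical placeholders for your own API, and SHA-256 is just one reasonable choice of hash.

```python
import hashlib
import os
import tempfile
from typing import Dict, Optional


def file_sha256(path: str, chunk_size: int = 64 * 1024) -> str:
    """Hash the file in chunks so large media never has to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


class FakeBackend:
    """Hypothetical backend that remembers uploads by content hash."""

    def __init__(self) -> None:
        self.upload_ids: Dict[str, str] = {}
        self.bytes_received = 0

    def find_upload(self, sha256: str) -> Optional[str]:
        return self.upload_ids.get(sha256)

    def upload(self, path: str, sha256: str) -> str:
        self.bytes_received += os.path.getsize(path)
        upload_id = f"upload-{len(self.upload_ids) + 1}"
        self.upload_ids[sha256] = upload_id
        return upload_id


def send_photo(backend: FakeBackend, path: str) -> str:
    sha = file_sha256(path)
    existing = backend.find_upload(sha)  # cheap check: only the hash is sent
    if existing is not None:
        return existing                  # reuse the upload ID, skip the transfer
    return backend.upload(path, sha)


photo = os.path.join(tempfile.mkdtemp(), "photo.jpg")
with open(photo, "wb") as f:
    f.write(b"x" * 1000)

backend = FakeBackend()
first_id = send_photo(backend, photo)   # sent to chat A: real upload
second_id = send_photo(backend, photo)  # sent to chat B: no upload

print(first_id == second_id)       # True
print(backend.bytes_received)      # 1000
```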

If you need to download many files, try delegating the work to a system service, like DownloadManager in Android. The system won't attribute the battery drain of these downloads to your app; at least, that is how it worked previously.

Push notifications

Try to leverage push notifications rather than using scheduled backend polling, or combine these approaches.

Push notifications can contain a payload. It is limited, but it is sufficient to send IDs to make a decision whether the app should urgently fetch any updates or not.

There is a different use case when you receive push notifications, but do not show them as a UI popup. Instead, you schedule some tasks and update the needed data when the user starts the app again. It is like a bi-directional data transfer; it is a bit less reliable but very power efficient.

Consider using Firebase Cloud Messaging (FCM) to cover both iOS and Android platforms. It works quite well and simplifies the process on both the client and server sides.

You can use high-priority FCM pushes in Android in case you need to deliver some data to the user as soon as possible. These notifications will be delivered to the destination device more quickly than regular notifications, which may wait for a scheduled maintenance window. We will discuss this further in the Android section.

The only thing to take into account with notifications is that they could be blocked by the user, and you will not receive any data. There are special APIs to check this, such as areNotificationsEnabled() added in Android API 24 or checking notification channel importance in later versions.

Background restrictions

iOS and Android have different restrictions for background activity, but a rule of thumb for any app activity is:

- app is visible on the screen – everything is allowed

- app was visible recently, now in background with Wi-Fi connection – everything is allowed, but heavy, non-urgent operations should be scheduled for when the device is connected to a charger

- app was visible recently, now in background with GSM connection – only important work is allowed, everything else should be scheduled for later

- app is in background for a long time – schedule work for later

We will discuss OS restrictions a bit later.

Don’t forget to close open sockets and WebSockets if the user moves your app to the background and doesn’t use it for some time. This will save some power and keep idle connections out of your app’s battery report.

Wakelocks

If your app uses system wakelocks or the FLAG_KEEP_SCREEN_ON window flag, try to release them when there is no activity from the user, so that they don't drain the battery.

For example, you acquire a “keep screen on” window flag for the displayed article, but the user leaves the device on the table and doesn't use it for some time. Set a timeout and when there are no actions from the user, turn it off to avoid draining.
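The timeout logic itself is tiny. The sketch below models it in plain Python with explicit timestamps so that it can run anywhere; the `KeepScreenOnGuard` class is hypothetical. In a real Android app, the places where `keep_screen_on` changes are where you would set or clear the window flag.

```python
class KeepScreenOnGuard:
    """Hypothetical helper that drops 'keep screen on' after user inactivity."""

    def __init__(self, timeout_seconds: float) -> None:
        self.timeout_seconds = timeout_seconds
        self.last_interaction = 0.0
        self.keep_screen_on = False

    def on_user_interaction(self, now: float) -> None:
        # Any touch or scroll renews the request to keep the screen on.
        self.last_interaction = now
        self.keep_screen_on = True

    def tick(self, now: float) -> None:
        # Called periodically; releases the request once the user has gone quiet.
        if self.keep_screen_on and now - self.last_interaction >= self.timeout_seconds:
            self.keep_screen_on = False


guard = KeepScreenOnGuard(timeout_seconds=60)

guard.on_user_interaction(now=0)
guard.tick(now=30)
print(guard.keep_screen_on)  # True: the user scrolled 30 seconds ago

guard.tick(now=60)
print(guard.keep_screen_on)  # False: the phone was left on the table
```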

Power changes

It is not a matter of power efficiency, but rather good manners to respond to the current battery level. If it is almost empty, try to delay all background fetches and schedule tasks for later.

Do you remember the joke about how taxi prices in your taxi app are much higher when your battery level is less than 10%?

There are usually special APIs from the OS. For Android, it's PowerManager.isPowerSaveMode() or global broadcast events:

- ACTION_POWER_SAVE_MODE_CHANGED

- ACTION_BATTERY_LOW

- ACTION_POWER_CONNECTED

Summary

All of these big and small improvements are ways to improve your app's performance, battery life, and user experience. Yes, that requires going the extra mile to add all of these sophisticated improvements, but it’s worth it.

OS

Let's review some important facts about operating systems, and Android in particular, that could help us understand battery consumption even better.

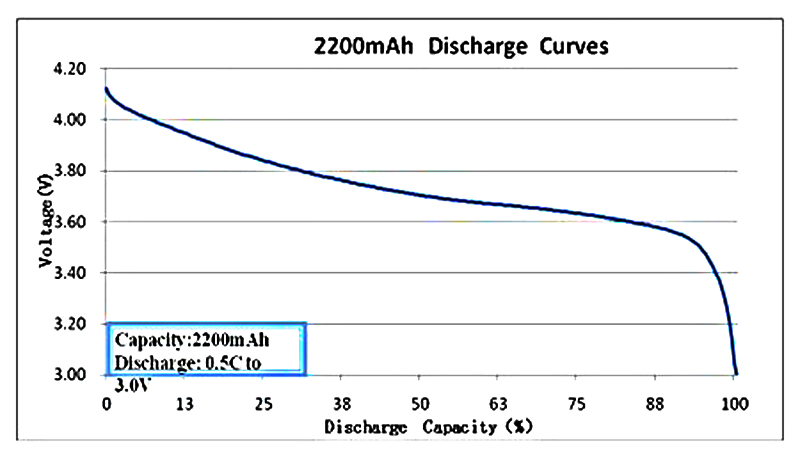

How OS measures battery level

In a smartphone, the concept of battery level is closely related to the factory's battery test statistics. At the manufacturer's factory, tests are conducted on a specific battery and a reference discharge curve is drawn. For example, like this:

This picture is absolutely typical: there are no consumer (cheap) Li-ion batteries that discharge evenly. The Y axis indicates the voltage level, and the X axis shows the percentage of charge. Based on this particular graph, made for a specific battery of a particular smartphone, Android OS calculates the charge level that we see on the screen. The information about voltage is provided by a hardware device (battery controller), and further calculations are done by an application of the operating system.

You may notice a rapid discharge with older batteries. Batteries deteriorate over time, but the level is still determined by referencing the manufacturer's chart.
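Conceptually, turning a voltage reading into a percentage is a lookup on that reference curve. The sketch below uses linear interpolation between a handful of points; the `DISCHARGE_CURVE` values are invented for illustration and do not describe any real battery, and actual implementations are more involved.

```python
from bisect import bisect_right

# Hypothetical reference curve: (voltage in volts, charge in percent).
# Note how uneven it is: most of the charge sits in a narrow voltage band.
DISCHARGE_CURVE = [
    (3.30, 0.0),
    (3.60, 10.0),
    (3.70, 30.0),
    (3.80, 60.0),
    (3.95, 80.0),
    (4.20, 100.0),
]


def battery_percent(voltage: float) -> float:
    """Estimate the charge level by interpolating on the reference curve."""
    volts = [v for v, _ in DISCHARGE_CURVE]
    if voltage <= volts[0]:
        return 0.0
    if voltage >= volts[-1]:
        return 100.0
    i = bisect_right(volts, voltage)          # first curve point above the reading
    (v0, p0), (v1, p1) = DISCHARGE_CURVE[i - 1], DISCHARGE_CURVE[i]
    return p0 + (p1 - p0) * (voltage - v0) / (v1 - v0)


for reading in (4.20, 3.75, 3.60, 3.20):
    print(reading, round(battery_percent(reading), 1))
```

Because the curve is fixed at the factory, an aged battery whose voltage sags faster will appear to lose percentage points faster as well.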

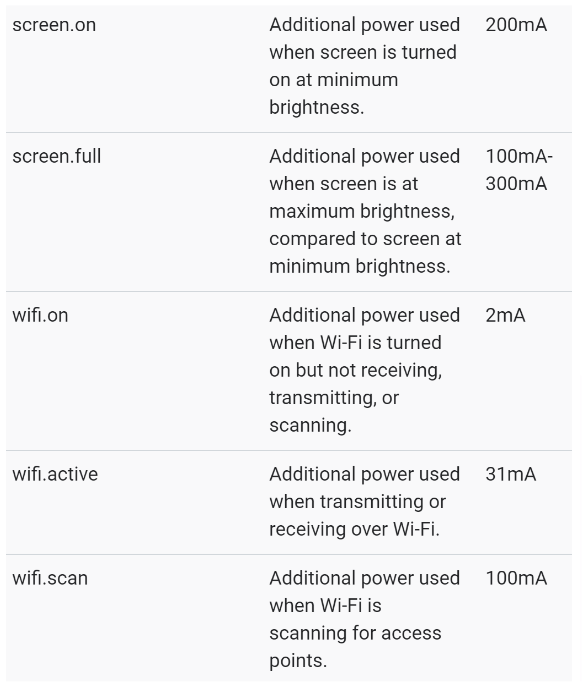

How OS measures battery consumption by application

Measuring how much power an app uses surprisingly turns out to be a hard problem. In fact, it’s not possible to get an exact measurement of an app’s battery consumption. You might now be thinking “but how does my device tell me that app X drains Y% of my battery in my device settings?”.

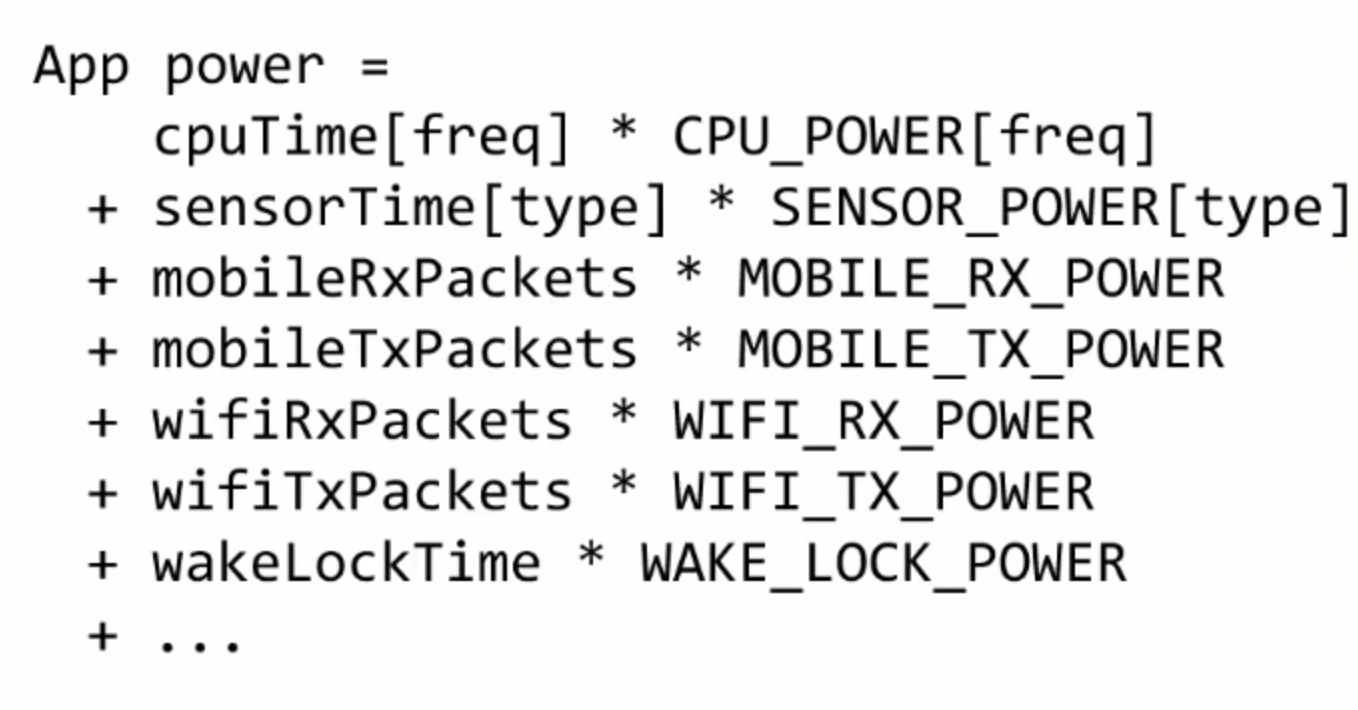

The answer is simple: the system keeps a log of how long an app uses Wi-Fi, the mobile radio, GPS, the screen, etc., and uses these data points to approximate how much battery consumption that usage amounts to. If you want to learn how this works in detail, you can take a look at the BatteryStats implementation in AOSP here (warning: it’s 15k LOC). The good thing is that this functionality is exposed to the developer through adb with the Batterystats tool, which means that we can make our lives a lot easier and don’t have to make those measurements ourselves.

The process is described in more detail here. In practice, every smartphone manufacturer provides the OS with a power profile for each hardware part. These numbers are used by the OS to calculate everything.
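The arithmetic behind such an estimate is simple: the current drawn by a component multiplied by the time it was in use. The sketch below is a heavy simplification of what the OS does; the component names are loosely modeled on power profile entries, and every milliamp value, as well as the 3000 mAh capacity, is a made-up assumption.

```python
from typing import Dict

# Hypothetical power profile: average current draw per component, in mA.
POWER_PROFILE_MA: Dict[str, float] = {
    "wifi.active": 120.0,
    "radio.active": 200.0,
    "gps.on": 50.0,
    "screen.on": 150.0,
}


def estimate_mah(usage_seconds: Dict[str, float]) -> float:
    """Charge consumed = sum over components of (current in mA * time in hours)."""
    return sum(
        POWER_PROFILE_MA[component] * seconds / 3600.0
        for component, seconds in usage_seconds.items()
    )


def battery_share_percent(usage_seconds: Dict[str, float], capacity_mah: float) -> float:
    return 100.0 * estimate_mah(usage_seconds) / capacity_mah


# An app that kept the mobile radio busy for 30 minutes and GPS on for 1 hour.
app_usage = {"radio.active": 1800, "gps.on": 3600}

print(estimate_mah(app_usage))                 # 150.0 mAh
print(battery_share_percent(app_usage, 3000))  # 5.0 percent of a 3000 mAh battery
```

This also explains why the number in system settings is an approximation: it is only as good as the usage log and the manufacturer's profile values.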

How to measure battery consumption for your app

There are several different approaches to measuring consumption for your app.

Logging

Logging won't help you measure the exact battery impact you made, but it is still very useful to write detailed application logs to a file, usually in a test or development environment only. QA and developers can share their logs for further analysis in case of any suspected trouble.

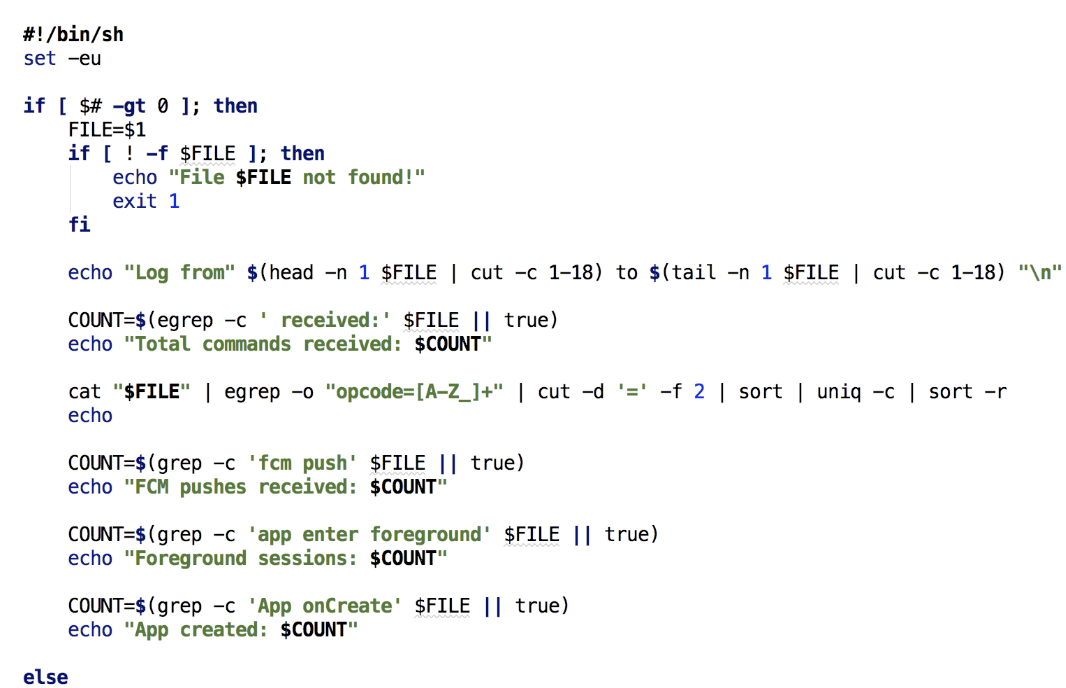

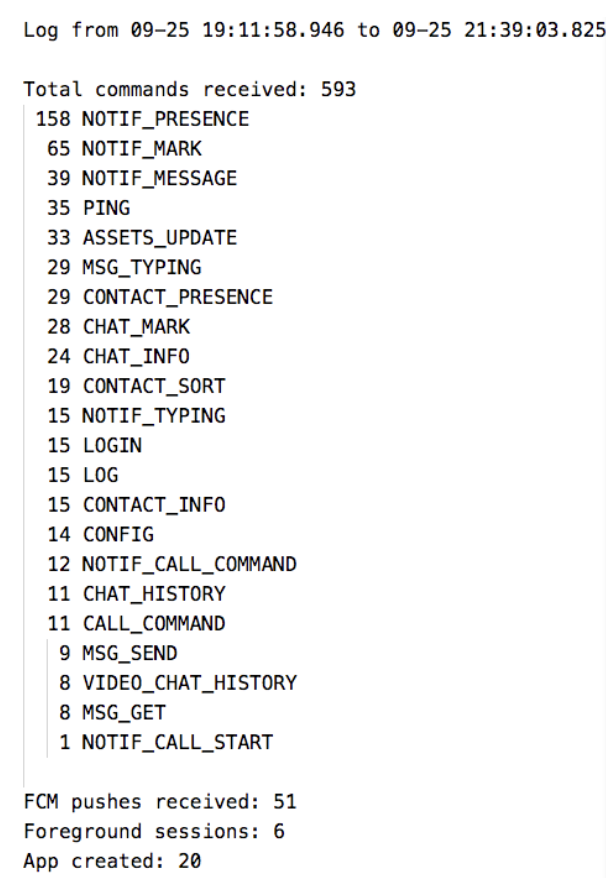

For the battery-oriented analysis, we usually track the daily app usage and then run a simple bash script to aggregate the data.

Later, we can:

- find out how many network requests were made, along with their frequency and count by type

- find out how many intensive tasks were scheduled and processed

- check for any abnormalities
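The aggregation does not need to be sophisticated. We use a bash script, but the same idea is shown here in Python for readability. The log line format (timestamp, a `NET` or `JOB` tag, then details) and the sample entries are assumptions made up for this example.

```python
from collections import Counter

# Hypothetical application log: "<timestamp> <tag> <details...>"
SAMPLE_LOG = """\
2023-05-01T09:00:01 NET GET /messages
2023-05-01T09:00:05 NET POST /messages
2023-05-01T09:00:09 JOB sync-contacts
2023-05-01T09:01:01 NET GET /messages
2023-05-01T09:01:30 NET GET /profile
"""


def count_network_requests(log_text: str) -> Counter:
    """Count network requests by (method, path), ignoring all other log lines."""
    counts: Counter = Counter()
    for line in log_text.splitlines():
        parts = line.split()
        if len(parts) >= 4 and parts[1] == "NET":
            counts[(parts[2], parts[3])] += 1
    return counts


for (method, path), n in count_network_requests(SAMPLE_LOG).most_common():
    print(n, method, path)
```

Sorting by count makes the chattiest endpoints stand out, and these are usually the first candidates for caching or batching.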

Here is a sample of all daily network requests of a chat application:

Manual Batterystats dumps analysis

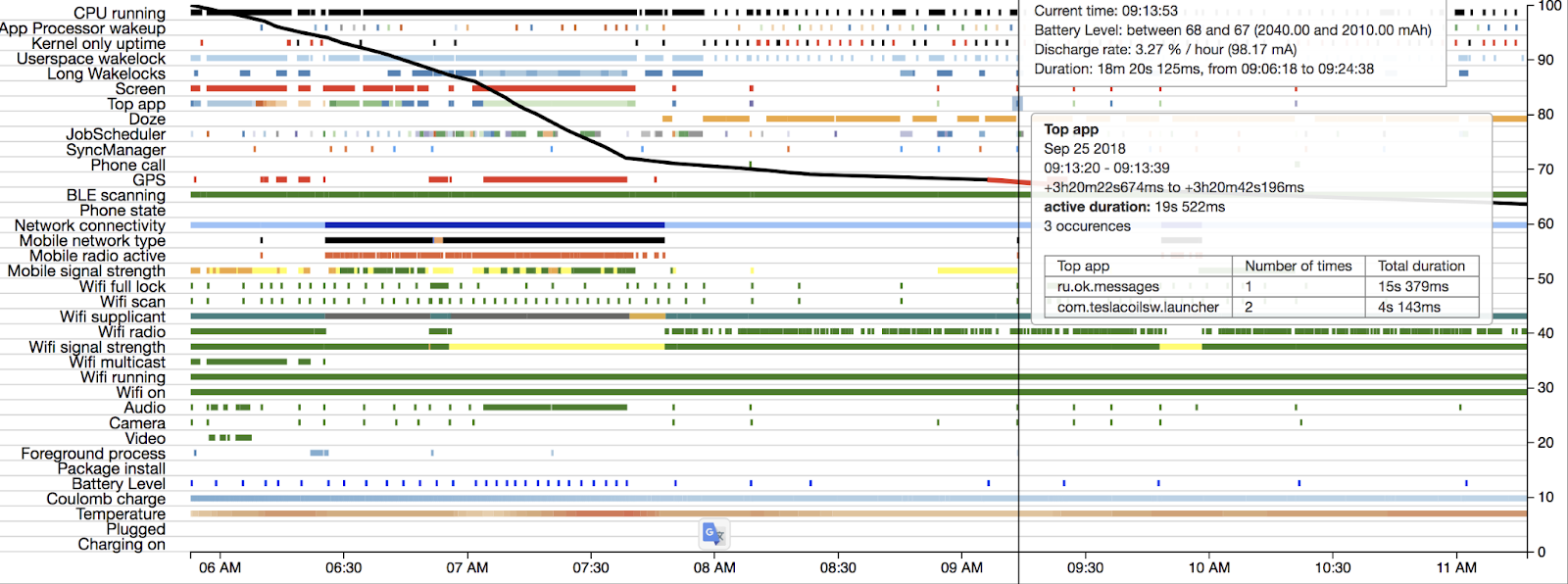

The Android development tools, specifically adb, come with useful debugging tools that we can use to get detailed system information and to dump system logs. One of those tools is the batterystats tool which records system events and uses the previously mentioned BatteryStats model to compute an approximation of each app’s battery usage.

Usually, people start analyzing their app's battery impact with a simpler UI tool: Battery Historian. But there is a lot more information hidden in the dumped batterystats log files than Battery Historian can offer. These files let you get detailed information on things like network usage, as well as a battery usage breakdown by device component (e.g. Wi-Fi radio, CPU, Bluetooth, sensors, etc.). Implementing this is straightforward, since you just need to issue a few adb commands to the phone and then use some regular expressions to extract the relevant data from the log (just don’t forget to call “adb shell dumpsys battery unplug” when you execute your battery usage flow).

Battery Historian tool

One of the main tools used to analyze battery consumption details in Android is the Battery Historian tool. This tool takes dumps from the batterystats tool and parses them into a fancy chart.

For example, in the screenshot above, you can see more intensive battery drain from 7 AM to 8 AM. This was due to me driving to the office with GPS turned on (red line next to the GPS row). Additionally, you can see what your phone and app were doing and when. This could be really useful for finding and improving certain cases.

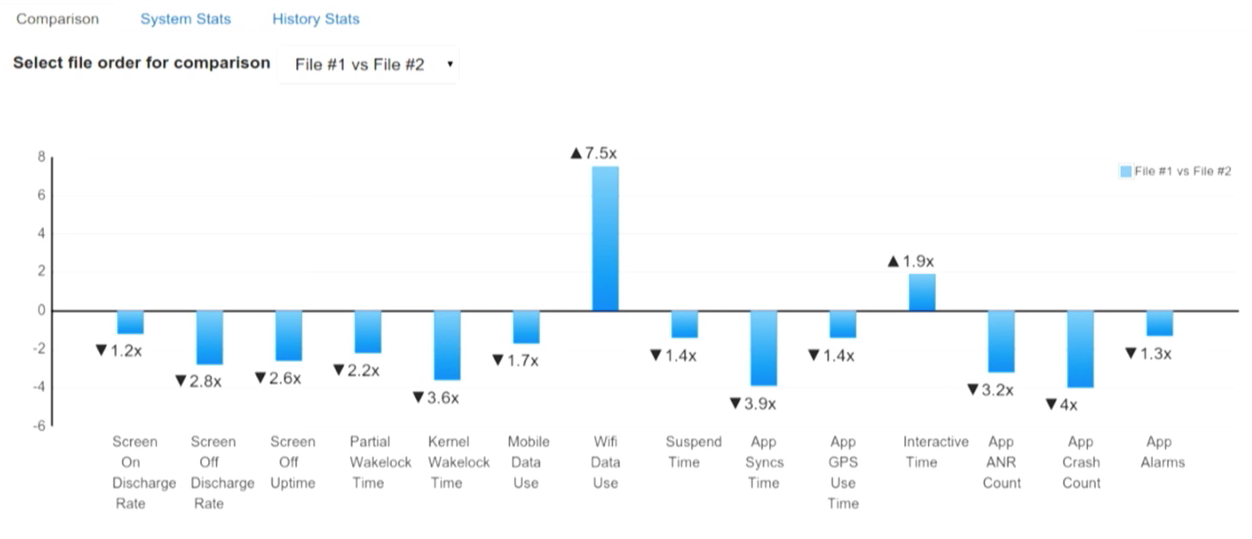

There is one more useful feature worth mentioning: bugreport comparison. You can run a user flow, dump the batterystats log, and store it. Then, you can improve something in the code that could affect battery life and dump the log again. When you have two logs, you can compare them to see the difference.

Android battery optimizations history

Operating system developers care a lot about user experience, performance, and battery health. That is why they come up with different restrictions for developers; though we usually don't like them as we have to adapt to new APIs, it is ultimately for our own good and helps ensure that our smartphones last at least until the end of the day.

Some time ago, there was a trick to avoid following new requirements and APIs: keeping an old target SDK for your app. However, this was restricted several years ago. Now you have to maintain a recent target SDK to be able to push updates to the Play Store. More details about the current state here.

We will review all the important changes since Android L for several reasons. The most important one is that modern libraries do not support API levels lower than API 21 (L). Additionally, before Android L, OS developers were occupied with essentials and with a race to catch up with iOS features, and as a result had no time to pay much attention to power consumption improvements. But starting with L, there were many very important improvements.

You can view a convenient table with all Android versions and links here.

Android 5 (L) / API 21-22

Google announced an initiative called Project Volta to address issues with battery drain in earlier versions.

Important developer tools, such as Batterystats and Battery Historian, were added. We discussed these tools in more detail above.

JobScheduler API was added. It lets you optimize battery life by defining jobs for the system to run asynchronously at a later time or under specified conditions (such as when the device is charging or on Wi-Fi).

Android 6 (M) / API 23

This release introduces new power-saving optimizations for idle devices and apps: Doze mode and App Standby.

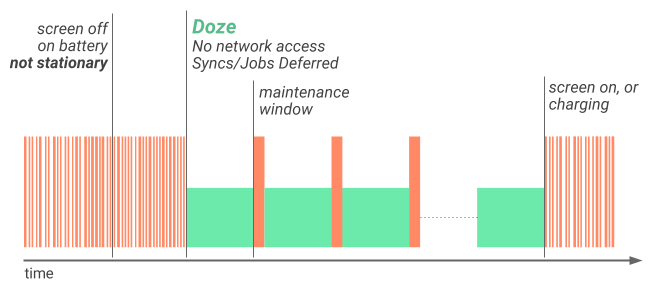

If a user unplugs a device and leaves it stationary with its screen off for a period of time, the device goes into Doze mode where it attempts to keep the system in a sleep state. In this mode, devices periodically resume normal operations for brief periods of time so that app syncing can occur and the system can perform any pending operations.

App Standby allows the system to determine that an app is idle when the user does not touch the app for a certain period of time. If the device is unplugged, the system disables network access and suspends syncs and jobs for the apps it deems idle.

Both of them are very reasonable. Why should you waste resources updating a feed for an app that hasn’t been used for a long time? Or while the phone is on the bedside table and not used at all? Maintenance windows are a good opportunity to save some battery.

More information about these optimizations can be found here.

A new type of push notification has been added: FCM High Priority. This is a push that can wake up a device in Doze Mode or App Standby and was meant to be used sparingly, only for critical messages. Unfortunately, many applications have been overusing this feature, sending only high-priority pushes which affects the battery.

Android 7 (N) / API 24-25

After almost a year of collecting Doze mode statistics, Android N has added an improved Doze on-the-go mode. This works even when your phone is in your pocket or bag and can detect idle state and restrict app activities in the background.

Background optimizations (also known as Project Svelte) were improved as well, and three implicit broadcasts were removed:

- Connectivity action

- New picture action

- New video action

Too many apps reacted to these events and began performing their tasks even though the user did not need them at that time.

Android 8 (O) / API 26-27

Apps can no longer register in their manifest for almost all implicit system broadcasts.

Background execution and background location limits were added.

It is not allowed to run a Service in the background. If an app starts such a service and the user quits the application, it will be killed by the system very soon. If you try to start a service when your application is in the background, the system will throw an exception.

Apps in the background receive location updates less frequently since Android O.

Android 9 (P) / API 28

Android P is one of the biggest updates in terms of power management optimizations.

Despite some core optimizations, such as faster file system work, small/big core optimizations, and CPU boosts, OS developers were facing the fact that battery drain is proportional to app count. If we have identical phones and I have 100 apps installed while you have just 10, my battery will drain faster.

Android 9 makes a number of improvements to battery saver mode. The device manufacturer determines the precise restrictions imposed. For example, on AOSP builds, the system applies the following restrictions:

- Aggressive standby mode instead of waiting for the app to be idle

- Background execution limits apply to all apps

- Location services may be disabled when the screen is off

- Background apps do not have network access

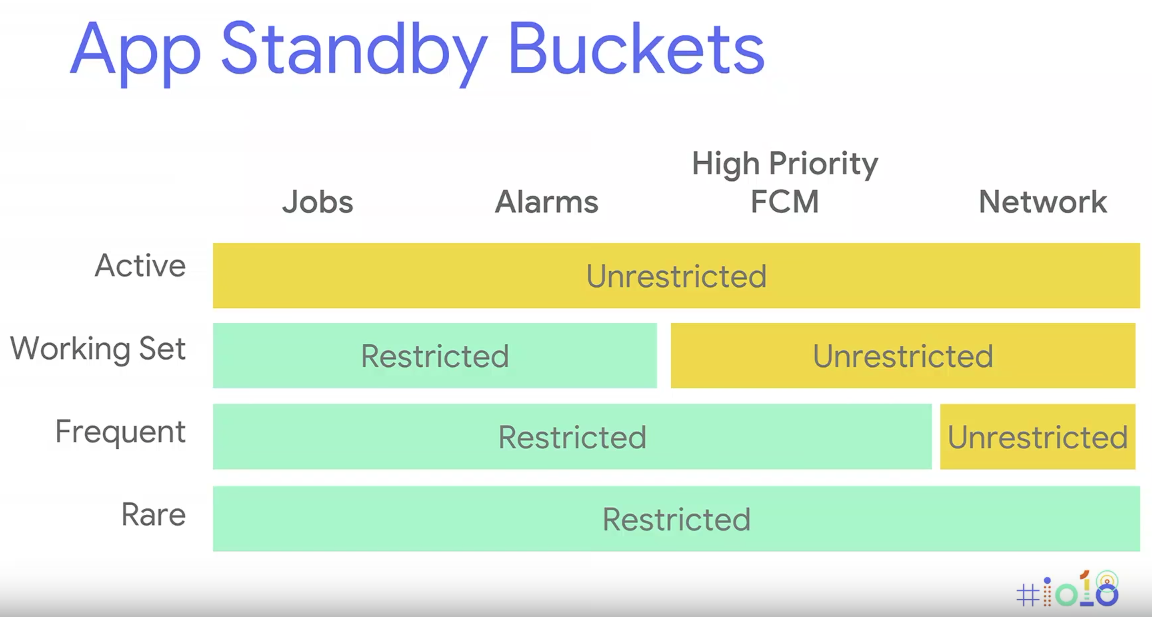

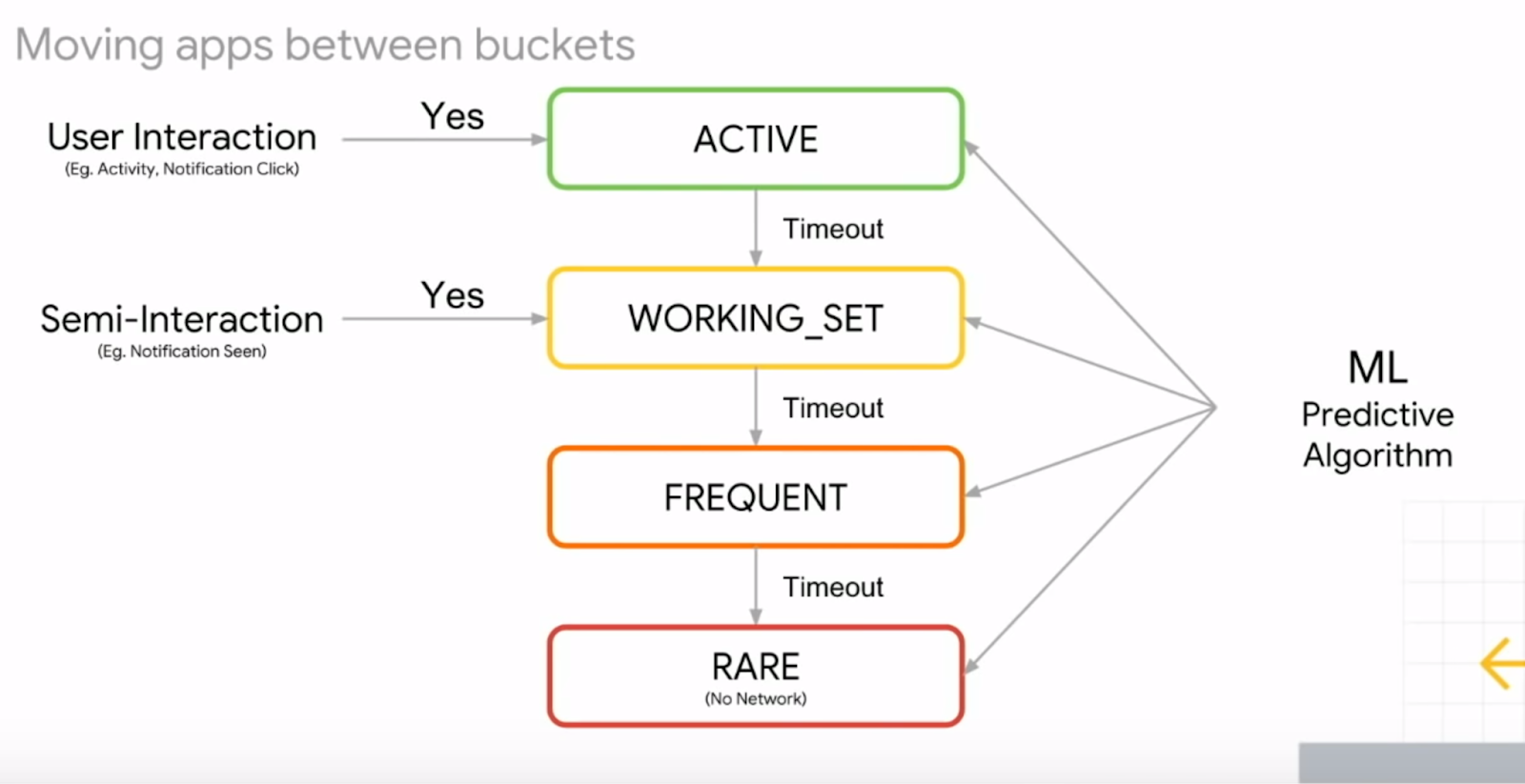

Android 9 introduces a new battery management feature, App Standby Buckets. App Standby Buckets helps the system prioritize apps' requests for resources based on how recently and how frequently the apps are used. Based on the app usage patterns, each app is placed in one of five priority buckets. The system limits the device resources available to each app based on which bucket the app is in.

A funny fact about this picture from Google I/O: restricted operations are shown in green (a positive color), in contrast to unrestricted ones in yellow (a warning color).

Adaptive Battery was introduced as an ML-based feature that predicts which bucket each app should go to. For example, say you usually open the Reddit app every morning. Knowing this from the usage stats, the system will open a maintenance window for this app to let it update everything it needs to update.

More details on power restrictions could be found here.

Applications in the background cannot access the microphone or the camera, and sensors either don’t send any data at all or do so at a lower frequency.

Android 10 / API 29

Dark mode was officially added. As you have read before, it can positively affect battery life.

Access to device location in the background requires permission since Android 10.

Foreground service types were added: a new feature that allows the system to understand why a Foreground Service is needed and to prioritize it. There are different reasons for a service: camera, connected device, data sync, location, media playback, media projection, microphone, phone call.

Previously, if a push came, it was possible to start an Activity from the Broadcast Receiver. However, this is no longer possible; any display of UI must be initiated by the user. For example, a notification can be shown. If the user clicks on it, we get permission to run the Activity; if they do not click on it, there will be an exception.

Some restrictions were added to Wi-Fi and Bluetooth network scans.

Android 11 / API 30

Not many important changes were noticed. One-time permissions were added that could be helpful for battery life; for example, one-time location permission.

Android 12 / API 31-32

Bandwidth estimation improvements: better estimations for the current connection across all applications. This API helps to get the current connection speed in order to make important networking decisions.

Applications in the background can’t start a Foreground Service, apart from a few exceptions. Consider using WorkManager to schedule and start expedited work while your app runs in the background. To complete time-sensitive actions that the user requests, start foreground services within an exact alarm.

Exact Alarm permission was added to save battery and not disturb Doze mode. It is not a runtime permission, but a special app access, like Picture-in-Picture. Therefore, we must ask the user to go to the system settings and allow our application to do this.

A new Restricted App Standby bucket was added. This bucket has the highest restrictions. The system takes into account your app's behavior, such as how often the user interacts with it, to decide whether to place your app in the restricted bucket. For example, the app may be placed there if the user does not interact with it for 45 days in Android 12 (Android 13 reduces this number to 8 days), or if your app consumes a great deal of system resources or exhibits undesirable behavior. More details on the bucket can be found here.

Android 13 / API 33

Users now can complete a workflow from the notification drawer to stop apps that have ongoing foreground services. This affordance is also known as the Task Manager. Apps must be able to handle this user-initiated stopping.

In Android 13 and higher, the system tries to determine the next time an app will be launched, and uses that estimation to run prefetch jobs. Apps should try to use prefetch jobs with JobScheduler (WorkManager) for any work that they want to be done prior to the next app launch.

High Priority Firebase Cloud Message (FCM) Quotas were added. App Standby Buckets no longer determine how many high priority FCMs an app can use. High priority FCM quotas scale in proportion to the number of notifications shown to the user in response to High Priority FCMs. If your application doesn't always post notifications in response to High Priority FCMs, it is recommended that you change the priority of these FCMs to normal so that the messages that result in a notification don't get downgraded.

Android 14 / API 34

Scheduling exact alarms is denied by default. Previously, the permission was pre-granted to most newly installed apps, but now you need to ask for it.

Conclusion

Thank you for reading this rather large article. Along the way, we:

- Reviewed possible battery drain sources

- Looked at hardware consumption baselines

- Discussed display features

- Reviewed possible passive (protocol, data format) and active (code improvements) optimizations

- Mentioned approaches to measure it yourself, and reviewed battery improvements history in Android

I hope all this information provides some explanation as to how things work and gives you ideas on how to improve your app.

Please contact me if you notice any mistakes or have any suggestions.

References

An Analysis of Power Consumption in a Smartphone, A. Carroll and G. Heiser, https://www.usenix.org/legacy/events/usenix10/tech/full_papers/Carroll.pdf

The Systems Hacker’s Guide to the Galaxy Energy Usage in a Modern Smartphone, A. Carroll and G. Heiser, https://trustworthy.systems/publications/nicta_full_text/7044.pdf

How is Energy Consumed in Smartphone Display Applications, X. Chen, Y. Chen, Z. Ma, Felix Fernandes, http://www.hotmobile.org/2013/papers/full/17.pdf

The Busy Coder’s Guide to Android Development, Mark Murphy, https://commonsware.com/Android/

Does 4G Use More Battery Power Than 3G? Nicholas Jones, https://www.weboost.com/blog/does-4g-use-more-battery-power-than-3g

Measuring Device Power, Android docs, https://source.android.com/devices/tech/power/device

Battery capacity in mAh and Wh, Alexey Nadezhin, https://ammo1.livejournal.com/585236.html

How Uber Engineering Evaluated JSON Encoding and Compression Algorithms to Put the Squeeze on Trip Data, Kåre Kjelstrøm, https://www.uber.com/en-GB/blog/trip-data-squeeze-json-encoding-compression/

What you need to know about dark themes and battery savings, Ara Wagoner, https://www.androidcentral.com/dark-themes-norm-not-exception

Discussion “AMOLED: Black wallpaper = Battery saving (experiment result)”, https://forum.xda-developers.com/showthread.php?t=660853

Fused Location Provider API, https://developers.google.com/location-context/fused-location-provider/

Request batching measurements, Dmitry Melnikov, https://github.com/melnikovdv/battery-drain

Google's (Anti)Trust Issues, Mark Murphy, https://commonsware.com/blog/2015/11/11/google-anti-trust-issues.html

Collection of stock apps and mechanisms, which might affect background tasks and scheduled alarms, https://github.com/dirkam/backgroundable-android

TLS Session Resumption: Full-speed and Secure, Zi Lin, https://blog.cloudflare.com/tls-session-resumption-full-speed-and-secure/

An overview of the SSL/TLS handshake, IBM MQ docs, https://ibm.com/support/knowledgecenter/en/SSFKSJ_9.0.0/com.ibm.mq.sec.doc/q009930_.htm

Keynote, Google I/O 2018, https://www.youtube.com/watch?v=ogfYd705cRs

Don't let your app drain your users' battery, Google I/O 2018, https://www.youtube.com/watch?v=kGWT99eMgyM

Android battery and memory optimizations, Google I/O 2016, https://www.youtube.com/watch?v=VC2Hlb22mZM

Cost of a pixel color (Android Dev Summit '18), https://www.youtube.com/watch?v=N_6sPd0Jd3g&t=183s

Android P, Power management docs, https://developer.android.com/about/versions/pie/power

Power management restrictions, Android guide, https://developer.android.com/topic/performance/power/power-details

Optimize for battery life, Android guide, https://developer.android.com/topic/performance/power

LZ4 Compression Library, Zhe Zhang, https://indico.cern.ch/event/631498/contributions/2553033/attachments/1443750/2223643/zlibvslz4presentation.pdf

Improving Battery Life with Restrictions, Android Dev Summit '18, https://www.youtube.com/watch?v=-7eZL3XRqas

Android AppUsageStatistics Sample, https://github.com/googlesamples/android-AppUsageStatistics

Location & Battery Drain, Android Performance Patterns Season 3 episode 7, https://www.youtube.com/watch?v=81W61JA6YHw

Dark Mode vs. Light Mode: Which Is Better?, Nielsen Norman Group, https://www.nngroup.com/articles/dark-mode/

Android Battery Testing at Microsoft YourPhone, Aaron Oertel, https://medium.com/android-microsoft/android-battery-testing-at-microsoft-yourphone-a1d6068bf09e

Profile battery usage with Batterystats and Battery Historian, Android guide, https://developer.android.com/topic/performance/power/setup-battery-historian

How does the phone measure battery level? Alexander Noskov, http://android.mobile-review.com/articles/65260/

Refresh rate explained: What does 60Hz, 90Hz, or 120Hz mean? Robert Triggs, https://www.androidauthority.com/phone-refresh-rate-90hz-120hz-1086643/

Refresh rate demo, https://www.testufo.com/framerates#count=6&background=stars&pps=120

Samsung says its 90Hz OLED is as good as 120Hz LCD, Gizmochina by Jeet, https://www.gizmochina.com/2020/06/29/samsung-claims-90hz-oled-as-good-as-120hz-lcd/

LTPO display tech for Galaxy Note 20+ to be called ‘HOP’, Dominik B., https://www.sammobile.com/news/new-details-on-galaxy-note-20-ltpo-display-tech-revealed/

What Is An LTPO Display, And Is It Better Than An OLED? Jakub Jirak, https://medium.com/codex/what-is-an-ltpo-display-and-is-it-better-than-an-oled-e001cf773da9

Measuring Power Values, Android docs, https://source.android.com/docs/core/power/values

Top 8 video file formats for your website, https://imagekit.io/blog/video-file-formats/

Improving battery life with restrictions (Android Dev Summit '18), https://www.youtube.com/watch?v=-7eZL3XRqas&t=172s
